
Azure AI Fundamentals AI-900 Exam Preparation: The Azure AI-900 exam is an opportunity to demonstrate knowledge of common machine learning (ML) and AI workloads and how to implement them on Azure. This exam is intended for candidates with both technical and non-technical backgrounds. Data science and software engineering experience are not required; however, some general programming knowledge or experience would be beneficial.

Azure AI Fundamentals can be used to prepare for other Azure role-based certifications like Azure Data Scientist Associate or Azure AI Engineer Associate, but it’s not a prerequisite for any of them.

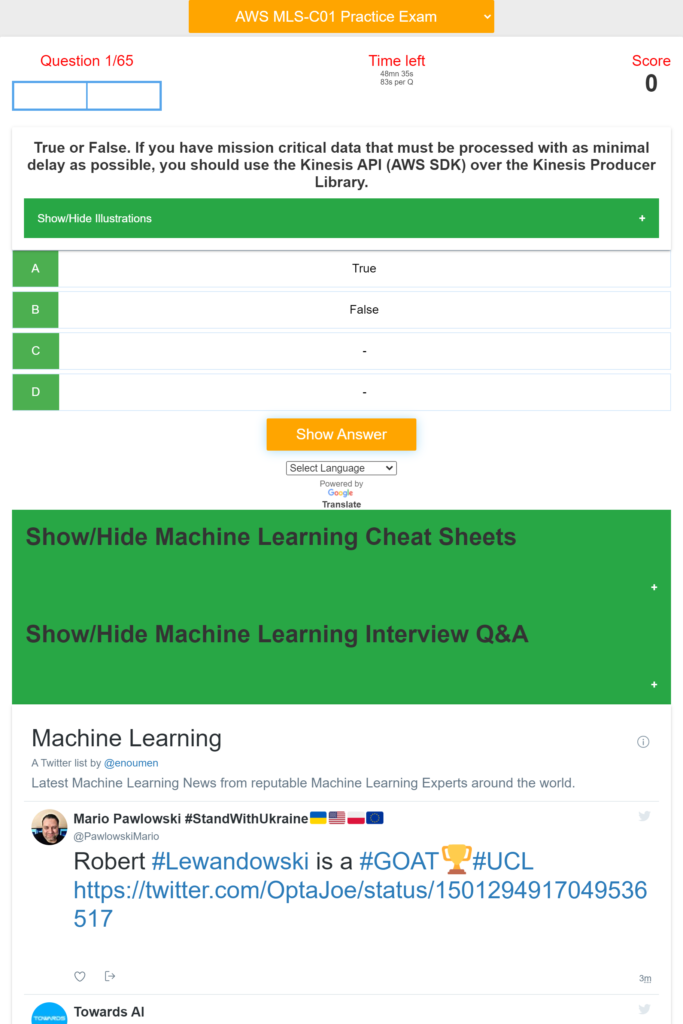

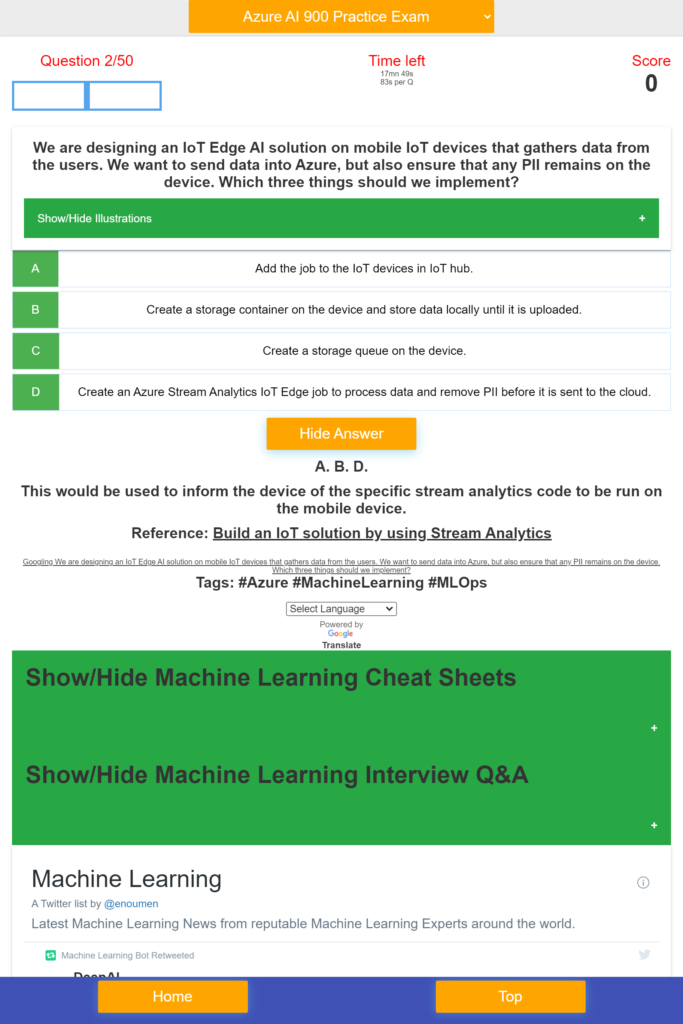

This Azure AI Fundamentals AI-900 Exam Preparation App provides basic and advanced machine learning quizzes and practice exams on Azure, Azure Machine Learning job interview questions and answers, and machine learning cheat sheets.

Download Azure AI 900 on Windows 10/11

Azure AI Fundamentals AI-900 Exam Preparation App Features:

– Azure AI-900 Questions with Detailed Answers and References

– Machine Learning Basics Questions and Answers

– Machine Learning Advanced Questions and Answers

– NLP and Computer Vision Questions and Answers

– Scorecard

– Countdown timer

– Machine Learning Cheat Sheets

– Machine Learning Interview Questions and Answers

– Machine Learning Latest News

This Azure AI Fundamentals AI-900 Exam Prep App covers:

- ML implementation and operations

- Describe Artificial Intelligence workloads and considerations

- Describe fundamental principles of machine learning on Azure

- Describe features of computer vision workloads on Azure

- Describe features of Natural Language Processing (NLP) workloads on Azure

- Describe features of conversational AI workloads on Azure

- QnA Maker service, Language Understanding service (LUIS), Speech service, Translator Text service, Form Recognizer service, Face service, Custom Vision service, Computer Vision service, facial detection, facial recognition, and facial analysis solutions, optical character recognition solutions, object detection solutions, image classification solutions, Azure Machine Learning designer, automated ML UI, conversational AI workloads, anomaly detection workloads, forecasting workloads, Kafka, SQL, NoSQL, Python, linear regression, logistic regression, sampling, datasets, statistical interaction, selection bias, non-Gaussian distributions, the bias-variance trade-off, the normal distribution, correlation and covariance, point estimates and confidence intervals, A/B testing, p-values, statistical power (sensitivity), overfitting and underfitting, regularization, the Law of Large Numbers, confounding variables, survivorship bias, univariate, bivariate, and multivariate analysis, resampling, ROC curves, TF-IDF vectorization, cluster sampling, etc.

This App can help you:

- Identify features of common AI workloads

- Identify prediction/forecasting workloads

- Identify features of anomaly detection workloads

- Identify computer vision workloads

- Identify natural language processing or knowledge mining workloads

- Identify conversational AI workloads

- Identify guiding principles for responsible AI

- Describe considerations for fairness in an AI solution

- Describe considerations for reliability and safety in an AI solution

- Describe considerations for privacy and security in an AI solution

- Describe considerations for inclusiveness in an AI solution

- Describe considerations for transparency in an AI solution

- Describe considerations for accountability in an AI solution

- Identify common types of computer vision solutions

- Identify Azure tools and services for computer vision tasks

- Identify features and uses for key phrase extraction

- Identify features and uses for entity recognition

- Identify features and uses for sentiment analysis

- Identify features and uses for language modeling

- Identify features and uses for speech recognition and synthesis

- Identify features and uses for translation

- Identify capabilities of the Text Analytics service

- Identify capabilities of the Language Understanding service (LUIS)

- etc.

Azure AI Fundamentals Breaking News – Azure AI Fundamentals Certifications Testimonials

- AZ-900 Exam by /u/mikkelmr135 (Microsoft Azure) on April 25, 2024 at 9:46 am

Hi there! I have a quick question about the AZ-900 exam. I'm finding SO MANY conflicting answers about how much you need to study and how hard the exam actually is. I have gone through all the Microsoft Learn material and consistently score above 90% on the practice assessments. Would that be enough, or do you recommend studying more via YouTube and such? 🙂

- Authenticator Registration prompt when excluded by /u/Suited043 (Microsoft Azure) on April 25, 2024 at 9:36 am

Hi all, I recently started at a new job and have been asked to roll out company-wide MFA. The request was to exclude trusted locations from the policy. I know this isn't the Zero Trust way, but I've got to do it. We also want to roll out MFA with a grace period during which people can opt in. However, about half of our users work in the office, which will be registered as a trusted location and therefore excluded. What I'm not satisfied with is that users who are excluded from MFA because of a trusted location do not get the prompt to register their authenticator. Is there a way to force people to register their accounts for MFA regardless of whether they are excluded?

- Time Sync for > Using AzureAD Group in Builtin Administrators group by /u/Freddo_aka_5ilv4 (Microsoft Azure) on April 25, 2024 at 8:11 am

Hi all, I'm trying to manage local administrators by using Intune policies to replace the local administrators. We add the built-in Administrator account and a group SID from Azure AD. Honestly, it's working well, but with all the Azure/Intune syncing, it's just a pain to "wait", right? I'd like to know if someone already uses this method and, if so: how long does it take? How can I "force" this sync? Thanks, mates!

- Admin consent - PnP Management Shell - a LOT of permissions requested by /u/dkarlq (Microsoft Azure) on April 25, 2024 at 8:01 am

Hi, I got an admin consent request for an application that I would like a second opinion on. It requires a LOT of permissions, and the user who requested it wants to use it to deploy some SharePoint templates. From googling, it looks like an open-source project that Microsoft refers to (but does not manage). Any takes on this? AppID: 31359c7f-bd7e-475c-86db-fdb8c937548e Permissions: https://preview.redd.it/je0sik021lwc1.png?width=328&format=png&auto=webp&s=68db8a8a42587e88ede9a3202fcb2f9da39dc5fd

- P2S VPN Gateway for Microsoft Entra ID Authentication by /u/rijoskill (Microsoft Azure) on April 25, 2024 at 5:27 am

- File Sync for DR by /u/superuseradmin1 (Microsoft Azure) on April 25, 2024 at 2:35 am

I'm looking to create a DR solution to protect some on-prem file shares we have at three different locations. Each location has one file server on site that is specific to that site. My goal is to get users up and running with their files in the share if the server goes down. I have a storage account set up with three file shares inside it, and I plan on naming each file share after its site. For the one I plan on testing with, I have File Sync set up with a sync group. I'm at the point of installing the File Sync agent on the on-prem server and testing a sync to Azure Storage. My initial thought is to set up a Windows server in Azure with a disk big enough to handle the on-prem file share, and then sync between the Azure Windows server and the on-prem one. Then, if on-prem goes down, I'd change Group Policy and send users to the server in Azure. My question is whether this is the best way to do this. I think it should work fine, but is creating a new server in Azure for each site going overboard? Is there a better way to get users to their synced file share, like going directly to the storage? Fewer servers to manage is always a good thing if possible. My end goal is to have users just live on and go to the Azure file share, though this could be a bit down the road. Thanks much! Edit: I should add that I am very new to Azure, and our on-prem file share uses NTFS permissions and security groups to control access to certain folders and files.

- Can't access isolated VM via Bastion by /u/_benwa (Microsoft Azure) on April 25, 2024 at 2:13 am

I have an isolated VNet with a Bastion host in it to access a recovery test domain controller. Unfortunately, I can't seem to actually connect to it. The Bastion subnet doesn't have an NSG, and the VM subnet has a rule allowing RDP from the Bastion subnet. Any ideas on what is wrong?

- Using Azure Functions to handle connection to Cosmos DB and website by /u/dot_equals (Microsoft Azure) on April 25, 2024 at 1:08 am

-SOLVED- Hi, I am having some issues getting my Azure Function to safely and securely retrieve a key or secret from my vault. At the moment I am just trying to figure out what type of auth_level I need and how to manage secure connections. Do I need to set up some sort of certificate to approve the handshake? I incorrectly assumed that all the authentication would be done within Azure when I set up IAM to give the function read/write access to the vault. I have been reading a lot of documentation and asking ChatGPT, but alas, here I am. Please let me know what information I should provide to help get a decent answer.

- 673 on my AZ-305 by /u/icebreaker374 (Microsoft Azure Certifications) on April 24, 2024 at 11:23 pm

I kind of took the AZ-305 on a whim after passing the 9/7/500 and 104 in the last week with minimal studying. There was SO much more SQL and DB-related stuff than I was expecting. I feel mildly discouraged that I got that close. What did you all use for prep?

- Linked Backend with Static Web App by /u/jayc12345678901 (Microsoft Azure) on April 24, 2024 at 11:13 pm

I’ve been running into a strange issue with Static Web Apps and App Service. I have my front end deployed with Static Web Apps, with the static web app behind a private endpoint. I have a separate App Service that I deploy my API to. Using the SWA “API” blade, I am able to link my App Service to the static web app. In theory, this should let me use domain.com/api as the URL of my API instead of using the autogenerated domain name given to my App Service directly. However, I keep getting a 403 when navigating to domain.com/api, whereas I expect to see a JSON response for that endpoint. My App Service requires no auth, and both the static web app and the App Service work on their own. I'm just confused about this 403.

- Help with AzureML: Deleted a compute instance that was running with disk full (and costs) by /u/augustcs (Microsoft Azure) on April 24, 2024 at 10:35 pm

I was using a compute instance to train a model, or at least I tried to. I changed something (another image) in my Docker environment, and when I ran the job, I got an error saying the compute's disk was full and it was in an unusable state: "Operating system disk has run out of disk space. Clear at least 5 GB disk space on OS disk (/dev/sda1/ filesystem mounted on /) through the terminal by removing files/folders, and then do sudo reboot. You can check available disk space by running df -h. For more details refer to https://aka.ms/cidiskfull" The compute was still running. However, I couldn't access the terminal (in the Notebooks section in Studio); it just said it 'wasn't available', or 'Current terminal is encountering some issues, please switch compute or restart your current compute and retry.' I then panicked, because I do this for a company that is very strict about costs, and the compute was still running, I could not stop it, and it was therefore (presumably) still accruing costs. So I deleted the instance. But I don't really know whether I have actually deleted it, or whether it is still running somewhere and we will still incur costs. How do I proceed from here? I'm relatively new to this and not that technically versed, as you might tell. Thanks in advance!

- A single consumer to read from multiple event hubs by /u/PlusBasket9721 (Microsoft Azure) on April 24, 2024 at 10:02 pm

Hey! I have an enterprise-level application with multiple microservices, and some of the background tasks and communication are handled by Event Hubs. The current implementation uses Event Hubs through the Kafka layer, but I am exploring the option of removing the Kafka layer and using the Azure SDK directly. I need help with one thing: can a single consumer running in one of my microservices read/consume events from multiple event hubs using the Azure SDK? I was able to get this working with the Kafka layer, but I couldn't find proper examples with the Azure SDK. Thanks in advance.

- Migrate/Extend Entra ID to Entra Domain Services by /u/theamadelorean (Microsoft Azure) on April 24, 2024 at 9:37 pm

We have a client that currently uses cloud-only accounts set up in Entra ID. Their devices are all Entra joined and authenticate that way. It's currently an organization of about 350 people. We are going to try to implement an Azure SMB file share (currently on SPO) but will need a domain environment set up for authentication and security groups. Looking through the Microsoft documentation, it looks like we'll have to have everyone reset their password when moving from Entra ID to Entra DS before we can use it. Has anyone gone through this process? I'm not necessarily opposed to setting up on-prem AD if that route is less of a headache, but I doubt it is.

- Azure Certification Path by /u/DaveC2020 (Microsoft Azure Certifications) on April 24, 2024 at 9:25 pm

I achieved the MS-900 certification last week and already have AZ-900. For my next certification, I’m thinking of going for AZ-104, but is it also worth looking into the next certification after MS-900?

- DevOps Migration (Tenant to Tenant) by /u/mattmak22 (Microsoft Azure) on April 24, 2024 at 9:17 pm

Hello, does anyone have experience migrating a Microsoft DevOps environment from one Azure tenant to another? I saw there is a way to "bind" DevOps to a different Entra AD environment. Is that all there is to it? I've tried digging around for info and haven't come up with much. Thank you in advance!

- Management Groups: Working with business units and environments by /u/DevManTim (Microsoft Azure) on April 24, 2024 at 9:10 pm

Following the Azure CAF model, a management group tree starts with the root, then an intermediate root, then platform, landing zones, decommissioned, sandbox, etc. Within the landing zones we have business units, i.e., logical associations of subscriptions to an organizational unit. While not illustrated in CAF, you would usually have multiple subscriptions per org unit based on environment. So, taking the diagram below, inside the Corp MG you'd have dev/tst/prd subscriptions. The trick and challenge is: how do you apply policy effectively in this structure when your environments are nested within an org unit? Consider this use case: at an enterprise level, we want to enforce a certain set of Azure policies in all production subscriptions. However, because there could be a prod subscription within SAP, Corp, and Online, we'd have to apply Azure Policy to those subscriptions individually instead of setting it at an MG and letting it trickle down. Any thoughts? https://preview.redd.it/joab7hmtlhwc1.png?width=725&format=png&auto=webp&s=c75857c04391020b6b878c9725c89d5eff99c675

- AZ 104 by /u/Dutchy2023 (Microsoft Azure Certifications) on April 24, 2024 at 8:09 pm

Stupid question: is it possible to get the AZ-104 certificate in 3 weeks, studying around 4-5 hours a day? I just lost my job and I'm done with being a Support Engineer. I'm looking for something like a Junior Cloud Engineer role.

- MC761220 - Adding required endpoints for provisioning cloud PCs by /u/JustOneMoreMile (Microsoft Azure) on April 24, 2024 at 7:10 pm

I have a client that is leveraging cloud PCs in M365. They received a notice saying that they need to ensure hm-iot-in-4-prod-prna01.azure-devices.net is reachable. I've looked all over the M365 tenant and cannot for the life of me find where to add the exceptions. The ANC health checks are passing, but the client gets an email with this message every day: "Summary: Azure network connection checks have failed and is potentially impacting over 1 Azure network connections and blocking the provisioning of new Cloud PCs."

- External tables not visible in SQL Pool by /u/Co2Mtl (Microsoft Azure) on April 24, 2024 at 6:28 pm

Hello, I'm trying to give a user access, through the SQL pool, to the external tables in the data lake. But it doesn't work: the user cannot see them in SSMS. He can see the database itself (Silver), but nothing in the External Tables folder, which remains empty. We are working with Azure Synapse. Access to the Bronze layer is OK, but that's different since it is a SQL database. For the enriched database (Silver), I gave his group access to the files in the container (Storage/Container in the Azure portal) with Read and Execute permissions. I applied these permissions to the relevant folder only, so nothing at the root level (not even on the container itself; is that correct?). However, I did add his group as a Contributor on the storage account. Nothing was applied (AFAIK) at the SQL level (GRANT permissions). Any help would be appreciated, thanks!

- General availability: Application Gateway Web Application Firewall (WAF) inspection limit & size enforcement by Azure service updates on April 24, 2024 at 6:00 pm

Azure’s regional Web Application Firewall (WAF) running on Application Gateway now supports greater control over request body inspection, and maximum size limits for request bodies and file uploads.
