
The AWS Certified Machine Learning Specialty validates expertise in building, training, tuning, and deploying machine learning (ML) models on AWS.

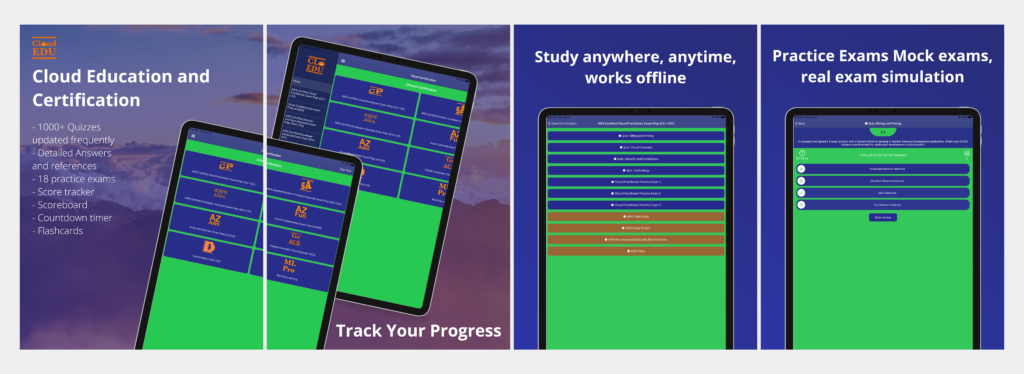

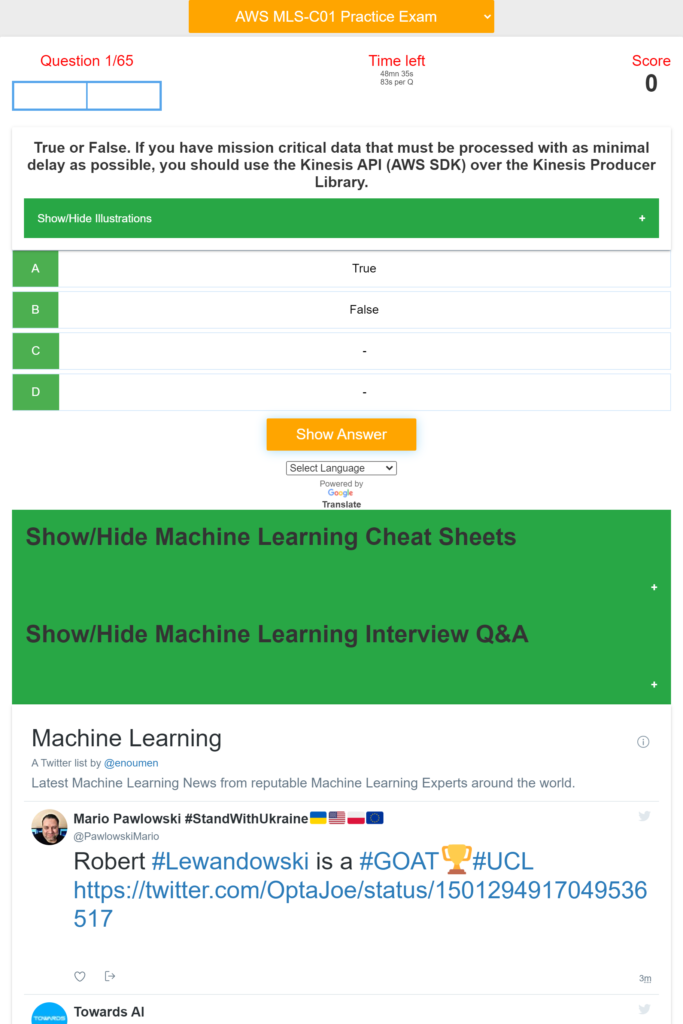

Use this App to learn about Machine Learning on AWS and prepare for the AWS Machine Learning Specialty Certification MLS-C01.

Download the AWS Machine Learning Specialty Exam Prep App on iOS

Download the AWS Machine Learning Specialty Exam Prep App on Android/Web/Amazon

The App provides hundreds of quizzes and practice exams covering:

– Machine Learning Operations on AWS

– Modeling

– Data Engineering

– Computer Vision

– Exploratory Data Analysis

– ML Implementation & Operations

– Machine Learning Basics Questions and Answers

– Machine Learning Advanced Questions and Answers

– Scorecard

– Countdown timer

– Machine Learning Cheat Sheets

– Machine Learning Interview Questions and Answers

– Machine Learning Latest News

The App covers Machine Learning Basics and Advanced topics including: NLP, Computer Vision, Python, linear regression, logistic regression, Sampling, dataset, statistical interaction, selection bias, non-Gaussian distribution, bias-variance trade-off, Normal Distribution, correlation and covariance, Point Estimates and Confidence Interval, A/B Testing, p-value, statistical power of sensitivity, over-fitting and under-fitting, regularization, Law of Large Numbers, Confounding Variables, Survivorship Bias, univariate, bivariate and multivariate, Resampling, ROC curve, TF/IDF vectorization, Cluster Sampling, etc.

Domain 1: Data Engineering

Create data repositories for machine learning.

Identify data sources (e.g., content and location, primary sources such as user data)

Determine storage mediums (e.g., DB, Data Lake, S3, EFS, EBS)

Identify and implement a data ingestion solution.

Data job styles/types (batch load, streaming)

Data ingestion pipelines (Batch-based ML workloads and streaming-based ML workloads), etc.

Domain 2: Exploratory Data Analysis

Sanitize and prepare data for modeling.

Perform feature engineering.

Analyze and visualize data for machine learning.

Domain 3: Modeling

Frame business problems as machine learning problems.

Select the appropriate model(s) for a given machine learning problem.

Train machine learning models.

Perform hyperparameter optimization.

Evaluate machine learning models.

Domain 4: Machine Learning Implementation and Operations

Build machine learning solutions for performance, availability, scalability, resiliency, and fault tolerance.

Recommend and implement the appropriate machine learning services and features for a given problem.

Apply basic AWS security practices to machine learning solutions.

Deploy and operationalize machine learning solutions.

Machine Learning Services covered:

Amazon Comprehend

AWS Deep Learning AMIs (DLAMI)

AWS DeepLens

Amazon Forecast

Amazon Fraud Detector

Amazon Lex

Amazon Polly

Amazon Rekognition

Amazon SageMaker

Amazon Textract

Amazon Transcribe

Amazon Translate

Other Services and topics covered are:

Ingestion/Collection

Processing/ETL

Data analysis/visualization

Model training

Model deployment/inference

Operational

AWS ML application services

Languages relevant to ML (for example, Python, Java, Scala, R, SQL)

Notebooks and integrated development environments (IDEs)

S3, SageMaker, Kinesis, Lake Formation, Athena, Kibana, Redshift, Textract, EMR, Glue, and data in CSV, JSON, IMG, or Parquet format or in databases

Amazon EC2, Amazon Elastic Container Registry (Amazon ECR), Amazon Elastic Container Service, Amazon Elastic Kubernetes Service, Amazon Redshift

Important: To succeed in the real exam, do not memorize the answers in this app. It is very important that you understand why an answer is right or wrong, and the concepts behind it, by carefully reading the reference documents in the answers.

Note and disclaimer: We are not affiliated with Microsoft or Azure or Google or Amazon. The questions are put together based on the certification study guide and materials available online. The questions in this app should help you pass the exam but it is not guaranteed. We are not responsible for any exam you did not pass.

- [D] What RL technique can be used to train an LLM on single preference data points, and not pairs? by /u/CatfishJones96 (Machine Learning) on April 18, 2024 at 9:38 am

Both PPO and DPO objectives seem to require a preferred (w) and dispreferred (l) response. Can either be used/altered to train/fine-tune an LLM on single preference data points (i.e. where I have only a preferred OR only a dispreferred response for each input prompt)? If not, is there any RL technique to accomplish this?
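For reference, the standard DPO objective makes the pairing explicit: each training example contributes the difference between the log-ratio of the preferred response $y_w$ and that of the dispreferred response $y_l$, so a lone response does not fit the loss as written. Here $\pi_\theta$ is the policy being trained, $\pi_{\text{ref}}$ the frozen reference model, $\beta$ a scaling hyperparameter, and $\sigma$ the logistic function.

```latex
\mathcal{L}_{\text{DPO}}(\pi_\theta; \pi_{\text{ref}})
  = -\,\mathbb{E}_{(x,\, y_w,\, y_l) \sim \mathcal{D}}
    \left[ \log \sigma\!\left(
      \beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\text{ref}}(y_w \mid x)}
      - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\text{ref}}(y_l \mid x)}
    \right) \right]
```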

- [D] Career path for Marketing Management grad student by /u/Independent_Main_432 (Machine Learning) on April 18, 2024 at 8:45 am

Hi everyone, in this post I would like to ask for advice from people reading this post on what path I should take. I don't want to write a particularly long text, so I will try to write as little as possible. I am 20 years old, this summer I am graduating from university, Faculty of Management, specialising in marketing. Half a year ago I realised that it's crap, and I don't like it at all, I started looking for something that I really like, I tried several different options (design, sales, e-commerce,) and came to the conclusion that I like programming most of all, and even later tried several languages and stuff, I realised that in programming I'm more interested in AI (Machine Learning, Deep Learning). After a few months of delving into this area, I sort of analysed my situation and made a conclusion for myself - without experience or without PhD or at least engineering education I will never get there. Then I started to look for more related specialities that would be as interesting to me as the AI topic, in general, having made parallels, I came to the conclusion that all kinds of Analysis, data manipulation are very interesting to me. Now I got acquainted with such speciality as Business Analyst, and the first impression I have about it is kind of not bad, and there is even a desire to develop in it. This thread is not about how to become a programmer, but what is the best direction to choose, which will be similar to Machine Learning, to come to it in the future, if I really like it and I don't give up on it after a while. So, my situation now: I study at university 3-4 days a week, 2-3 days a week I work in a warehouse (part-time), I understand that if after graduation I do not get a good job, I will have to work in a warehouse 5/2 for a long time, I'm not very high from this work, and plus I need to get up at 5 am to be at work by 6 o'clock. 
I am in a foreign country (I am from Ukraine, but I have been living in Poland for 2.5 years; I speak the language at B2 level, and English at B1-B2). I wanted to keep this as short as possible, but it didn't really work out :) I will be glad to see your answers and tips for development in this field. It's also worth mentioning that I don't really want to go to university again and study there for 3.5 years for an engineering degree (I think that's a very long time, and the diploma may simply become irrelevant). I also don't have much money in general; if I go back to university, it will be in a year, and only if I don't give up on the idea.

- [D] I want to train a model that will detect complex tables and extract its content meaningfully. How to do it? by /u/IcyParfait3120 (Machine Learning) on April 18, 2024 at 8:12 am

As I said, I want to train an AI model that will detect complex tables in either PDFs or images of PDFs, and I want to extract meaningful data from those tables. I am just starting out in ML and don't know much. How should I go about training the model I just described? Thank you.

- [R] Here Is How Gorilla Changed The Way LLMs Use Tools by /u/LesleyFair (Machine Learning) on April 18, 2024 at 6:47 am

LLMs are fundamentally limited. They are built from a constant set of weights. As a result, they store one constant block of information. Vendors are offering more and more plugins that allow their models to use external tools through APIs. This enables models to perform more complex tasks using Google, Python, or translation services.

What is changing?

Gorilla marks the transition from using a few hard-coded tools to opening LLMs up to use the vast space of cloud-based APIs. If we extrapolate this path into the future, LLMs could become the primary interface to compute infrastructure and the web.

Gorilla vs. GPT-4 and Claude

This sounds quite lofty. And it is! And surely there is a long way to go before we get there, but this week’s paper takes a first exciting step in the right direction. Let’s check it out!

Motivation: Why was it still hard for models to use tools?

State-of-the-art LLMs such as GPT-4 struggle to generate accurate API calls. This often happens due to their tendency to hallucinate. Further, much of the prior work on tool use in language models has focussed on integrating a small set of well-documented APIs into the model. In essence, the API documentation was just dumped into the prompt and then the model was asked to generate an API call. This approach is limited. Very limited. It is impossible to fit all of the world’s APIs into the model’s context window. So, to eventually integrate a model with millions of tools, a completely different approach is needed. Here is where Gorilla comes in!

What is Gorilla?

In a sentence, Gorilla is a finetuned LLaMA-based model that writes API calls better than GPT-4 does. Let’s break down their approach to understand what they actually did.

How Does It Work?

Their approach can be broken down into three steps. First, they constructed a sizable dataset of API calls and their documentation. Then, they used the self-instruct method to simulate a user instructing the model to use these APIs. Last, they finetuned LLaMA on their data and did several interesting experiments to investigate how much a retriever could boost performance. Let’s zoom in on each of the three points to get a better understanding.

Their dataset (APIBench) was created by scraping APIs from public model hubs (TorchHub, TensorHub and HuggingFace). After several cleaning and filtering steps, this resulted in a dataset with more than 1700 documented API calls.

The second step is where the actual training dataset was built. They used self-instruct to build instruction-API pairs. In plain English, this means they showed each of the documented APIs to GPT-4. Then they asked the model to generate 10 potential real-world use cases that would result in using each of the APIs. Let’s look at an example! If GPT-4 were presented with the following API call:

model = torch.hub.load('huggingface/pytorch-transformers', 'model', 'bert-base-uncased')

It would output an instruction such as: “Load an uncased BERT model”. Almost done. Just one more step to go.

In the third and final step, they finetuned a LLaMA model on the {instruction, API} pairs. The resulting model outperformed GPT-4, ChatGPT, and base LLaMA by 20%, 10%, and 83%, respectively. Not bad. However, this only refers to using the model in a zero-shot manner. The model did not have any access to additional API documentation. This raises the question: What happens if we integrated a retriever and gave the model access to these things?

The Integration Of Retrievers And Some Criticism

APIs change all the time. This is a challenge for any model because the frequency of updates to APIs is likely to outpace any retraining schedule. This makes tool use particularly susceptible to changes in the very APIs that are supposed to be processed. With arguments like this one, the authors drive home the point that retrievers are likely to play an important part in any tool use in LLMs.

To approach this challenge, they trained LLaMA with different retrievers. With mixed results. They found that using a so-called oracle retriever, which always provides the correct piece of documentation, greatly boosted performance. That’s not really a surprise if you ask me. However, using standard retrievers such as BM25 and GPT-Index was shown to degrade performance by double-digit percentages. The authors conclude that using a sub-par retriever tends to confuse the model more than it helps.

This is where I have to disagree slightly with their otherwise great approach. They say that they only include the top-1 result from the retriever. That makes no sense to me. Everyone who has ever worked with information retrieval knows that it is almost impossible to make the retriever return the correct paragraph in the top position. If they had included the top 10 or top 50 results from the retrieval step, the results might look very different. I guess we can’t always get what we want. But I still wonder why they did that.

Before we wrap up, let’s end on a positive note! I love the fact that they made their dataset APIBench publicly available. In an increasingly closed-source world, I am always delighted to see such acts of kindness to the community!

P.S. If you found this useful, please share it with a friend or subscribe for weekly breakdowns of influential papers.
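The top-1 versus top-k point can be illustrated with a toy retriever. The following is a minimal sketch, assuming a hypothetical three-document corpus and a simple token-overlap score; it is not BM25, not GPT-Index, and not the setup used by the Gorilla authors. All names (`DOCS`, `overlap_score`, `retrieve`) are made up for illustration.

```python
from collections import Counter

# Hypothetical mini-corpus of API descriptions (illustrative, not from APIBench).
DOCS = {
    "bert": "load an uncased bert model for text",
    "resnet": "load a pretrained resnet model for image classification",
    "whisper": "transcribe speech audio to text",
}

def overlap_score(query: str, doc: str) -> int:
    """Toy relevance score: number of tokens shared by query and document."""
    q, d = Counter(query.lower().split()), Counter(doc.lower().split())
    return sum((q & d).values())

def retrieve(query: str, k: int) -> list[str]:
    """Return the names of the k highest-scoring documents."""
    ranked = sorted(DOCS, key=lambda name: overlap_score(query, DOCS[name]), reverse=True)
    return ranked[:k]

# The user wants a text model, but generic words pull the image model to rank 1.
query = "load a pretrained model for text"
top1 = retrieve(query, k=1)   # only the single best-scoring document
top2 = retrieve(query, k=2)   # a slightly wider net
```

In this toy case the relevant document ("bert") is missed at k=1 but recovered at k=2, which is the kind of effect the post speculates about. Whether a larger k would have changed the paper's results is not something this sketch can show.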

- [D] Finding data sets of satellite images by /u/MayanthaCry (Machine Learning) on April 18, 2024 at 6:22 am

I am planning to detect illegal crop planting in forests, but it's difficult to find a suitable dataset. Is there any way to overcome this?

- [D], [R], Colocation for servers with 4090 GPUs with purpose to use for ML/AI by /u/jaytea21 (Machine Learning) on April 18, 2024 at 5:58 am

Hello, I'm not sure if this is the right place to post this but I hope it is, if not please feel free to let me know where else this should be posted and I'll post my questions there. With that being said pretty much what the title says but are there any caveats I need to be aware of attempting to ask for rack space at a colo in order to put my servers there? People are doing it obviously but I'm not sure how? Do I just ask for rackspace, put my server(s) in there and be done with it? Do colos not ask questions on what the server has in it? Looking for someone who has experience with putting these kinds of servers into colos so I can get some good advice. Second question is, I have my own open air rigs for all my servers currently but I'm at the limit of the house power to add more so that's why I'm looking into the colo to help expand also to offload what is at my house. I seem to be having a hard time finding a chassis that will allow at least 2 power supplies and can hold at least 5 4090 GPUs (4 would be acceptable as well). Does anyone have experience with chassis that can hold 5 4090s (these are the regular sized ones - not blower cards) including an H12SSL board? I've google'd a bit and I'm finding really nothing that I think would fit what I'm trying to do, so since I'm failing to google what I'm trying to find I figured asking here would be maybe better as I am hoping someone might have a nice chassis or two they could recommend? Thanks in advance! submitted by /u/jaytea21 [link] [comments]

- [D] Analysing 100s of time series to recognise incidents/events via correlation by /u/OmarasaurusRex (Machine Learning) on April 18, 2024 at 4:01 am

Hi Folks, I was able to set up time series forecasting and anomaly detection for metrics that are generated by our numerous and large Kubernetes clusters via prophet and/or statsforecast. The use case that I plan on tackling next is to continuously analyse 100s of time series values and then recognise time-based events via correlation, e.g. 20 individual alarms of node failure turn into a single event via time series correlation. This would potentially allow us to recognise causality with unrelated components as well. Any ideas on how I might achieve this? Please be gentle on the math, as I'm an SRE playing the role of an ML engineer here 🙂
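As a starting point for the "20 alarms become one event" idea, here is a minimal sketch that groups alarms purely by time proximity. It assumes alarms arrive as (timestamp, source) records and that a fixed gap threshold is acceptable; the `Alarm` class, `group_alarms` function, and the 60-second default are hypothetical. It does not establish correlation or causality, only temporal clustering.

```python
from dataclasses import dataclass

@dataclass
class Alarm:
    timestamp: float  # seconds since some reference point
    source: str       # e.g. the node or component that raised the alarm

def group_alarms(alarms, max_gap=60.0):
    """Merge alarms into events.

    A new event starts whenever the gap to the previous alarm
    (in time order) exceeds max_gap seconds.
    """
    events = []
    for alarm in sorted(alarms, key=lambda a: a.timestamp):
        if events and alarm.timestamp - events[-1][-1].timestamp <= max_gap:
            events[-1].append(alarm)   # close enough: same event
        else:
            events.append([alarm])     # too far apart: start a new event
    return events

alarms = [
    Alarm(0, "node-1"),
    Alarm(20, "node-2"),
    Alarm(45, "node-3"),
    Alarm(600, "node-7"),
]
events = group_alarms(alarms)
```

With these sample alarms, the first three fall into one event and the last one forms its own. A real system would likely also compare the underlying metric series before declaring alarms related.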

- [P] Training a VQGAN but GAN loss keeps going up by /u/darthjaja6 (Machine Learning) on April 18, 2024 at 2:02 am

https://preview.redd.it/h13z13eua5vc1.png?width=640&format=png&auto=webp&s=397d5127453b2f4a1d6f6df28fb5fc8a2f2f0cff

I think the VQ loss and perceptual loss look normal, but I find it hard to understand why the discriminator goes in a completely different direction. Has anyone seen something similar before? More details: I'm training the VQGAN from the paper Taming Transformers for High-Resolution Image Synthesis on ImageNet.

- [R] [2404.10667] VASA-1: Lifelike Audio-Driven Talking Faces Generated in Real Time by /u/s6x (Machine Learning) on April 18, 2024 at 12:41 am

- [N] Feds appoint “AI doomer” to run US AI safety institute by /u/bregav (Machine Learning) on April 17, 2024 at 10:49 pm

https://arstechnica.com/tech-policy/2024/04/feds-appoint-ai-doomer-to-run-us-ai-safety-institute/

Article intro: Appointed as head of AI safety is Paul Christiano, a former OpenAI researcher who pioneered a foundational AI safety technique called reinforcement learning from human feedback (RLHF), but is also known for predicting that "there's a 50 percent chance AI development could end in 'doom.'" While Christiano's research background is impressive, some fear that by appointing a so-called "AI doomer," NIST may be risking encouraging non-scientific thinking that many critics view as sheer speculation.

- [D] Is Risk Aversion Crushing the Adoption of Cloud Abstractions? by /u/Ok_Post_149 (Machine Learning) on April 17, 2024 at 9:47 pm

Hey All, I think many of us can agree that defining the hardware we want to use right next to the piece of code we are running is objectively a much better developer experience. I have always loved the idea of lowering the barrier when it comes to running code in the cloud. As more cloud abstractions hit the market, I was honestly really surprised by the lack of adoption. There aren't any unicorns (I don't think any actually) in this space yet, just series A businesses. After speaking with a handful of Data Scientists, Machine Learning Engineers, and DevOps Engineers, it started to dawn on me that risk aversion is causing most of the friction. Using a fully managed service can definitely have some upsides, and in many cases, I prefer using them, but convincing your boss to pipe petabytes of data to another company's cloud and incur 3-5x compute costs probably isn't going to sit well. There are also some open source alternatives but they are intentionally difficult to configure so you pay for their premium offerings that reduce config setup. Would love to hear everyone's thoughts, especially those who work at lean startups and global 5,000 companies. submitted by /u/Ok_Post_149 [link] [comments]

- [D] Is there a way to determine if the representations a model learns are spherical or hyperbolic? by /u/Mad_Scientist2027 (Machine Learning) on April 17, 2024 at 8:49 pm

Title. Is there a way to determine the degree of sphericity or hyperbolicity of the embeddings a feature extractor learns for a set of examples it has been trained on / will be tested on? I am new to geometry in deep learning. It would be amazing if anyone could also point me to a paper or a book to get started on this. Thanks in advance.

- [R] RuleOpt: Optimization-Based Rule Learning for Classification by /u/zedeleyici3401 (Machine Learning) on April 17, 2024 at 7:34 pm

Paper: https://arxiv.org/abs/2104.10751

Package: https://github.com/sametcopur/ruleopt

Documentation: https://ruleopt.readthedocs.io/

RuleOpt is an optimization-based rule learning algorithm designed for classification problems. Focusing on scalability and interpretability, RuleOpt utilizes linear programming for rule generation and extraction. The Python library ruleopt is capable of extracting rules from ensemble models, and it also implements a novel rule generation scheme. The library ensures compatibility with existing machine learning pipelines, and it is especially efficient for tackling large-scale problems.

Here are a few highlights of ruleopt:

– Efficient Rule Generation and Extraction: Leverages linear programming for scalable rule generation (stand-alone machine learning method) and rule extraction from trained random forest and boosting models.

– Interpretability: Prioritizes model transparency by assigning costs to rules in order to achieve a desirable balance with accuracy.

– Integration with Machine Learning Libraries: Facilitates smooth integration with well-known Python libraries scikit-learn, LightGBM, and XGBoost, and existing machine learning pipelines.

– Extensive Solver Support: Supports a wide array of solvers, including Gurobi, CPLEX and OR-Tools.

- [D] LSTM Time Series Forecasting by /u/StressAccomplished26 (Machine Learning) on April 17, 2024 at 7:15 pm

I've been using LSTM models for time series forecasting and have noticed they perform well for predicting the immediate next step. However, when attempting multi-step predictions to forecast one week ahead (168 periods, with hourly data), the performance drops significantly. Currently, I'm using a recursive approach: feeding back the prediction as the next input (closed loop). This method isn't yielding good results, although open loop predictions are much more accurate. Is there a better technique for enhancing LSTM's multi-step prediction accuracy? Are LSTMs not useful for multi-step forecasting? Any links or resources to articles explaining multi-step forecasting with LSTMs would be appreciated.

https://preview.redd.it/30y3m16gr3vc1.png?width=833&format=png&auto=webp&s=6d6b29e05b105b50d2689127ea6881d1ec667903

https://preview.redd.it/a971j16gr3vc1.png?width=833&format=png&auto=webp&s=fec277d9343c5f702247a6135dbb630358c14cca
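The closed-loop approach described in the post, and one commonly suggested alternative (training a model to emit the whole horizon at once, often called direct or multi-output forecasting), can be sketched as follows. This is a minimal illustration, assuming a generic one-step predictor passed in as a callable; `toy_model` is a stand-in for a trained LSTM, and all function names are hypothetical.

```python
import numpy as np

def recursive_forecast(one_step_model, history, horizon, window):
    """Closed loop: each prediction is fed back as the newest input value."""
    buf = list(history[-window:])
    preds = []
    for _ in range(horizon):
        y_hat = one_step_model(np.asarray(buf))
        preds.append(y_hat)
        buf = buf[1:] + [y_hat]   # drop the oldest value, append the prediction
    return np.asarray(preds)

def make_direct_windows(series, window, horizon):
    """Build (X, Y) pairs whose targets span the full horizon.

    A model trained on these pairs predicts all `horizon` steps in one
    shot, so it never consumes its own predictions as inputs.
    """
    X, Y = [], []
    for i in range(len(series) - window - horizon + 1):
        X.append(series[i:i + window])
        Y.append(series[i + window:i + window + horizon])
    return np.asarray(X), np.asarray(Y)

# Stand-in for a trained one-step model: predicts the mean of its input window.
toy_model = lambda w: float(w.mean())

series = np.arange(10, dtype=float)
preds = recursive_forecast(toy_model, series, horizon=3, window=2)
X, Y = make_direct_windows(series, window=4, horizon=3)
```

In the recursive loop, any error in an early prediction becomes part of later inputs, which is one plausible reason accuracy degrades over 168 steps. The direct formulation avoids that feedback at the cost of a larger output layer; which works better is an empirical question for a given dataset.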

- [R] ResearchAgent: Iterative Research Idea Generation over Scientific Literature with Large Language Models by /u/SeawaterFlows (Machine Learning) on April 17, 2024 at 5:49 pm

Paper: https://arxiv.org/abs/2404.07738 Abstract: Scientific Research, vital for improving human life, is hindered by its inherent complexity, slow pace, and the need for specialized experts. To enhance its productivity, we propose a ResearchAgent, a large language model-powered research idea writing agent, which automatically generates problems, methods, and experiment designs while iteratively refining them based on scientific literature. Specifically, starting with a core paper as the primary focus to generate ideas, our ResearchAgent is augmented not only with relevant publications through connecting information over an academic graph but also entities retrieved from an entity-centric knowledge store based on their underlying concepts, mined and shared across numerous papers. In addition, mirroring the human approach to iteratively improving ideas with peer discussions, we leverage multiple ReviewingAgents that provide reviews and feedback iteratively. Further, they are instantiated with human preference-aligned large language models whose criteria for evaluation are derived from actual human judgments. We experimentally validate our ResearchAgent on scientific publications across multiple disciplines, showcasing its effectiveness in generating novel, clear, and valid research ideas based on human and model-based evaluation results. submitted by /u/SeawaterFlows [link] [comments]

- [R] Ctrl-Adapter: An Efficient and Versatile Framework for Adapting Diverse Controls to Any Diffusion Model by /u/SeawaterFlows (Machine Learning) on April 17, 2024 at 5:34 pm

Paper: https://arxiv.org/abs/2404.09967 Code: https://github.com/HL-hanlin/Ctrl-Adapter Models: https://huggingface.co/hanlincs/Ctrl-Adapter Project page: https://ctrl-adapter.github.io/ Abstract: ControlNets are widely used for adding spatial control in image generation with different conditions, such as depth maps, canny edges, and human poses. However, there are several challenges when leveraging the pretrained image ControlNets for controlled video generation. First, pretrained ControlNet cannot be directly plugged into new backbone models due to the mismatch of feature spaces, and the cost of training ControlNets for new backbones is a big burden. Second, ControlNet features for different frames might not effectively handle the temporal consistency. To address these challenges, we introduce Ctrl-Adapter, an efficient and versatile framework that adds diverse controls to any image/video diffusion models, by adapting pretrained ControlNets (and improving temporal alignment for videos). Ctrl-Adapter provides diverse capabilities including image control, video control, video control with sparse frames, multi-condition control, compatibility with different backbones, adaptation to unseen control conditions, and video editing. In Ctrl-Adapter, we train adapter layers that fuse pretrained ControlNet features to different image/video diffusion models, while keeping the parameters of the ControlNets and the diffusion models frozen. Ctrl-Adapter consists of temporal and spatial modules so that it can effectively handle the temporal consistency of videos. We also propose latent skipping and inverse timestep sampling for robust adaptation and sparse control. Moreover, Ctrl-Adapter enables control from multiple conditions by simply taking the (weighted) average of ControlNet outputs. 
With diverse image/video diffusion backbones (SDXL, Hotshot-XL, I2VGen-XL, and SVD), Ctrl-Adapter matches ControlNet for image control and outperforms all baselines for video control (achieving the SOTA accuracy on the DAVIS 2017 dataset) with significantly lower computational costs (less than 10 GPU hours). submitted by /u/SeawaterFlows [link] [comments]

- [D] Question: Time-series decoding to non-temporal latent space? by /u/reesespike (Machine Learning) on April 17, 2024 at 5:08 pm

Hello! I am a researcher in computational neuroscience, looking to apply some contemporary machine learning techniques to fMRI timeseries data. I have a collection of highly dimensional 4D fMRI timeseries data collected while subjects were observing naturalistic images from COCO at regular intervals. We currently have decoding models that take preprocessed "snapshots" of this timeseries data flattened into an activation pattern that is aggregated over the short period the image was being observed, and use some machine learning models to decode and reconstruct the image content from the brain. (See some of my recent work). I am curious what sort of machine learning techniques exist that might be able to address the time-series data itself, without having to collapse the timeseries to a single snapshot to perform our decoding process. What I am envisioning is a model (perhaps a transformer) that can take as input a highly dimensional multichannel timeseries and output a flattened latent representation (say, a CLIP vector) corresponding to an image stimulus, or even a series of latent vectors separated by a known regular interval (as we have in our data for the different image presentations). To my knowledge most of the work in machine learning with time series data is in forecasting, but what I want is a static (or potentially repetitive) output. My hope is that the more detailed timeseries data will have additional signal that will boost decoding performance for fMRI vision decoding. Is there any existing work in the field of ML that has tackled a similar problem? submitted by /u/reesespike [link] [comments]

- Uncover hidden connections in unstructured financial data with Amazon Bedrock and Amazon Neptune by Xan Huang (AWS Machine Learning Blog) on April 17, 2024 at 3:00 pm

In asset management, portfolio managers need to closely monitor companies in their investment universe to identify risks and opportunities, and guide investment decisions. Tracking direct events like earnings reports or credit downgrades is straightforward—you can set up alerts to notify managers of news containing company names. However, detecting second and third-order impacts arising from events

- Open source observability for AWS Inferentia nodes within Amazon EKS clusters by Riccardo Freschi (AWS Machine Learning Blog) on April 17, 2024 at 2:54 pm

This post walks you through the Open Source Observability pattern for AWS Inferentia, which shows you how to monitor the performance of ML chips, used in an Amazon Elastic Kubernetes Service (Amazon EKS) cluster, with data plane nodes based on Amazon Elastic Compute Cloud (Amazon EC2) instances of type Inf1 and Inf2.

- [D] In cross-attention, why is Q taken from the decoder and K taken from the encoder's output? by /u/shuvamg007 (Machine Learning) on April 17, 2024 at 2:49 pm

I looked in so many places but couldn't find an answer. What happens if we switch Q and K to be from the encoder and decoder respectively? Would it make any difference?
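One way to approach the question is to look at shapes. The following is a minimal single-head sketch in NumPy, assuming random projection matrices and toy sequence lengths (none of these values come from the post). It shows that the attention output has one row per query position, so taking queries from the decoder yields one context vector per target position.

```python
import numpy as np

def cross_attention(query_states, memory_states, Wq, Wk, Wv):
    """Single-head scaled dot-product attention.

    Queries come from query_states; keys and values from memory_states.
    """
    Q = query_states @ Wq                       # (T_query, d)
    K = memory_states @ Wk                      # (T_memory, d)
    V = memory_states @ Wv                      # (T_memory, d)
    scores = Q @ K.T / np.sqrt(Q.shape[-1])     # (T_query, T_memory)
    scores -= scores.max(axis=-1, keepdims=True)  # for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax over memory positions
    return weights @ V                          # (T_query, d)

rng = np.random.default_rng(0)
d = 8
dec = rng.normal(size=(3, d))   # 3 decoder (target) positions
enc = rng.normal(size=(5, d))   # 5 encoder (source) positions
Wq, Wk, Wv = (rng.normal(size=(d, d)) for _ in range(3))

out = cross_attention(dec, enc, Wq, Wk, Wv)      # standard: Q from decoder
swapped = cross_attention(enc, dec, Wq, Wk, Wv)  # roles reversed: Q from encoder
```

In the standard arrangement `out` has 3 rows, matching the decoder positions that need to be updated. With the roles reversed, the result has 5 rows, one per encoder position, which no longer lines up with the decoder's sequence. This only illustrates the shape argument and is not a full answer to the question.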

- [D] How does visual embedding coexist with language embedding space in Vision Language Model? by /u/E-fazz (Machine Learning) on April 17, 2024 at 2:41 pm

Hello everyone! I'm excited to discuss about Large Vision Language Model (LVLM). Since we're probably the biggest community into LLMs, I thought this channel would be the perfect place to start this conversation. Also, there isn't much out there on combining vision and language embeddings. A little background on LVLMs: They typically consist of a vision encoder for images, a regular tokenizer for text, a projection layer like an MLP to align vision features with text embedding spaces, and finally, merging both image and text embeddings for sending into the LLM model. The input includes both text and images, while the output is text, making it a multimodal LLM. Check out this diagram from the LLaVA paper for a visual breakdown: https://preview.redd.it/l222askgu1vc1.png?width=1607&format=png&auto=webp&s=ef011e16301c22b4751d8d0a8f3698f70e3ffd26 Starting with a vision encoder like CLIP ViT, the model learns visual information from images, then uses an MLP to project this onto the LLM's embedding space. The paper calls this feature alignment. I'm curious about how vision embeddings interact with text embeddings, so I experimented by visualizing them in 3D with PCA. For instance, take the llava-7B model—it uses the llama-7B backend with a 32k vocabulary size and 4096 dimensions, making the embedding size: [32000,4096]. I used a simple prompt, "Explain this image to me," with a picture of a cat to see how the embeddings appear in our space. https://preview.redd.it/032oy0ynu1vc1.png?width=662&format=png&auto=webp&s=d037bbecc976392e159a1c1bde775ef1e148488d Adding visual tokens changes the dynamics. Each image transforms into 576 vision tokens of shape [576,4096]. Check out how the plot adjusts when these tokens are included: https://preview.redd.it/9c3cu7ksu1vc1.png?width=660&format=png&auto=webp&s=c0aab6782fc309eba09ec660759bfaf48582dc14 The entire text embedding seems smaller, represented by tiny blue dots containing the entire llama-7B vocabulary. 
To zoom in further, I highlighted only the visual tokens near the embedding (meaning higher cosine similarity). Here’s how they cluster together: https://preview.redd.it/vdeacylwu1vc1.png?width=566&format=png&auto=webp&s=42441b4fd515cee916b40243429b4aa6820b998c So what do I think? First, we aren't directly converting visual tokens into text. A recent Google paper tried and found it wasn't the best approach. It seems that visual reasoning hovers close to text embedding spaces, likely because images are denser in information, requiring more tokens to represent visual concepts. Secondly, this setup seems right for now. Visual tokens, in context with text tokens, add image-derived context to the LLM, enabling it to 'see' an image. Lastly, even though llava is performing well on some benchmarks in visual reasoning, it might not be the most efficient at image representation yet. Some recent studies talked about it's sparse attention phenomenon, especially with visual tokens in LVLMs. We are just lucky because the attention algorithm attends to only meaningful visual tokens and ignores the noises. What do you think? Thanks for reading. 🙂 submitted by /u/E-fazz [link] [comments]
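The two steps described in the post (3D PCA of the combined embeddings, then picking the visual tokens with the highest cosine similarity to any text embedding) can be sketched roughly as follows. This is a minimal illustration, not the poster's code: the arrays are random stand-ins with much smaller, hypothetical shapes than the [32000, 4096] and [576, 4096] tensors mentioned above, and the helper names are invented for the example.

```python
import numpy as np

def cosine_similarity(a, b):
    """Pairwise cosine similarity between rows of a (n, d) and rows of b (m, d)."""
    a_unit = a / np.linalg.norm(a, axis=1, keepdims=True)
    b_unit = b / np.linalg.norm(b, axis=1, keepdims=True)
    return a_unit @ b_unit.T

def pca_project(points, n_components=3):
    """Project rows onto the top principal components via SVD of the centered data."""
    centered = points - points.mean(axis=0, keepdims=True)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

rng = np.random.default_rng(0)
# Random stand-ins (assumption): real values would come from the model.
text_emb = rng.normal(size=(2000, 64))                  # plays the role of [32000, 4096]
visual_tokens = rng.normal(scale=5.0, size=(576, 64))   # plays the role of [576, 4096]

# Similarity of every visual token to every vocabulary embedding.
sims = cosine_similarity(visual_tokens, text_emb)       # shape (576, 2000)
best_sim = sims.max(axis=1)                             # closest text embedding per visual token
closest_visual = np.argsort(best_sim)[-20:]             # 20 visual tokens nearest the text space

# Joint 3D projection of text and visual embeddings for plotting.
coords = pca_project(np.vstack([text_emb, visual_tokens]))
```

The rows of `coords` can be passed to any 3D scatter plot, with the first 2000 rows colored as text embeddings and the remaining 576 as visual tokens.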

- Good Resources on Time Series Forecasting? [D] by /u/secret_fyre (Machine Learning) on April 17, 2024 at 2:26 pm

Can anyone recommend any good resources on modern time series forecasting with machine learning? I found one book on time series forecasting on Amazon with great reviews called Time Series Forecasting in Python. Having said that, a lot of machine learning books and resources seem to gloss over time series. What are some good resources (either entire books, or chapters in books) that cover time series?

- [D] Best NLP encoders (BERT...) for NER with very low data fine-tuning? by /u/LelouchZer12 (Machine Learning) on April 17, 2024 at 1:40 pm

Hi, I am aware that a lot of transformer encoder variations exist (BERT, DistilBERT, DeBERTa, RoBERTa ...). However, I am not interested in the best ones (that should probably be DeBERTa V3) but rather in the ones that quickly reach decent results with very few examples (around 50 to 100 sentences, each containing maybe 1, 2 or 3 entities). I have done a few experiments in English, and to my surprise the model that performs best with as little data as possible is the original English BERT model (google-bert/bert-base-uncased on HF), not one of the more recent variations. I have also done other experiments in French, and multilingual BERT also gets decent results faster than models trained specifically on French data (e.g., CamemBERT). The models I've compared include: bert, bert multilingual, distilbert, distilbert multilingual, roberta, xlm-roberta, camembert, camemberta, distilroberta, debertav3, debertav3 multilingual. What are your thoughts about this? Is it surprising or unusual? Any advice?

- Word embedding - contextualised vs word2vec [D] by /u/datashri (Machine Learning) on April 17, 2024 at 1:03 pm

Noob question about word embeddings. As far as I understand so far, contextualized word embeddings generated by BERT and other LLM-type models use the attention mechanism and take into account the context of the word, so the same word in different sentences can have different vectors. This is opposed to the older approach of models like word2vec, whose embeddings are not contextual. However, looking closely at the CBOW and skip-gram models, it seems that they too try to predict the central word based on the surrounding (context) words. So the embeddings generated by word2vec can also be contextual. So they're both contextual? What am I missing?
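One way to see the distinction raised in the question: word2vec uses context words only while training, and what it produces is a fixed lookup table with one vector per word, whereas attention-based models recompute each word's vector from the sentence at inference time. The toy sketch below illustrates that difference. It is not word2vec or BERT: the vocabulary, the random table values, and the weight-free attention step are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = {"the": 0, "river": 1, "money": 2, "bank": 3}
table = rng.normal(size=(len(vocab), 8))   # toy static embedding table (random stand-in values)

def static_embed(sentence):
    """word2vec-style lookup: one fixed row per word, whatever the sentence is."""
    return np.stack([table[vocab[word]] for word in sentence])

def contextual_embed(sentence):
    """Toy single-head self-attention over the static vectors (no learned weights)."""
    x = static_embed(sentence)
    scores = x @ x.T / np.sqrt(x.shape[1])
    scores = scores - scores.max(axis=1, keepdims=True)   # numerically stable softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)
    return weights @ x                                    # each row mixes in its neighbours

s1 = ["the", "river", "bank"]
s2 = ["the", "money", "bank"]

# Compare the vector for "bank" (position 2) across the two sentences.
static_same = np.allclose(static_embed(s1)[2], static_embed(s2)[2])
contextual_same = np.allclose(contextual_embed(s1)[2], contextual_embed(s2)[2])
print(static_same, contextual_same)
```

In this sketch the static vectors for "bank" are identical in both sentences, while the attention-mixed vectors differ because each one absorbs a different neighbour.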

- [Discussion] ACM MM2024 by /u/INeedPapers_TTT (Machine Learning) on April 17, 2024 at 10:35 am

This is the first year (if I remember correctly) that MM has shifted from CMT to OpenReview. As an author, I've sensed something was wrong since I created my submission: desk rejections even before the abstract deadline, inconsistency about whether to include the submission number in the paper, etc. Now I've heard a lot on social media that many authors with few or no publications (yes, including me) have been nominated as reviewers because there are not enough reviewers for the submission volume. I'm very concerned about the quality of the reviews and the submissions in MM2024 this year.

- [D] What comes first, math, or algorithm in research? by /u/Deep-Station-1746 (Machine Learning) on April 17, 2024 at 8:22 am

I'm learning the math behind diffusion right now (DDPM, score-based, and other approaches), and I'm wondering how exactly researchers came up with the idea. Does inventing new approaches go something like this?

1. We want to make a better image generator.
2. Oh, the data will never be enough...
3. Let's multiply the data by adding some noise corruption.
4. This works well. What if we make a denoising network?
5. What if we make a network that produces an image from pure noise?
6. That doesn't work. What if we take smaller denoising steps?
7. This works! Now, let's create some theory on why it works.
8. Write the paper.

Or something like this?

1. We want to make a better image generator.
2. We know "nonequilibrium thermodynamics" really well and want to try applying it somehow.
3. We somehow come up with an algorithm that relies on math from that theory.
4. It works!
5. We write the paper.

Which usually comes first, math or algorithm?

- The future of AI/ML data centers is going to be 100's, even 1000's of servers running like one giant accelerator [D] by /u/Low_Complaint2254 (Machine Learning) on April 17, 2024 at 6:16 am

Saw this informative video on the server company Gigabyte's website (https://youtu.be/2Q7S-CbnAAY?si=DJtU2mQ_ZKRZ83Nf). The short version is that server brands are now shipping complete clusters of servers to data centers instead of individual machines. In the example shown here, it's 8 racks (plus one extra for management and networking), with 4 servers of the same model in each rack, and with 8 super-advanced GPUs of the same model in each server. To do the math for you, that's 32 servers or 256 GPU accelerators per cluster. Take note that all the servers and GPUs have to be the same model because they are connected in a way that they basically operate as one individual machine.

The reason this is very likely to be the standard building block in all AI data centers is that, with the way we are training AI on large datasets right now, the parameters number in the billions, even the trillions. This is especially true for the LLMs that brought us ChatGPT and its ilk. The only way to handle these trillions of parameters with any efficiency is through parallel computing on a scale we've never seen before. Hence this bold new concept of connecting hundreds, even thousands, of servers together so they are basically one giant server loaded with thousands of GPUs from Nvidia or other brands. Truly fascinating stuff, and I've not seen anything else on this scale that's currently being proposed for the future of AI computing. Here's the website of the cluster introduced in the video: https://www.gigabyte.com/Industry-Solutions/giga-pod-as-a-service?lan=en
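A quick sanity check of the cluster arithmetic in the post. The quoted totals (32 servers, 256 GPU accelerators) work out if each server carries 8 GPUs, which is treated as an assumption here; with 4 GPUs per server the same layout would give 128.

```python
racks = 8               # compute racks; the extra management/networking rack is not counted
servers_per_rack = 4
gpus_per_server = 8     # assumption: the value that makes the quoted 256-GPU total consistent

servers = racks * servers_per_rack
gpus = servers * gpus_per_server
print(servers, gpus)
```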

- [R] The Illusion of State in State-Space Models by /u/hardmaru (Machine Learning) on April 17, 2024 at 2:58 am


- Explore data with ease: Use SQL and Text-to-SQL in Amazon SageMaker Studio JupyterLab notebooks by Pranav Murthy (AWS Machine Learning Blog) on April 16, 2024 at 11:00 pm

Amazon SageMaker Studio provides a fully managed solution for data scientists to interactively build, train, and deploy machine learning (ML) models. In the process of working on their ML tasks, data scientists typically start their workflow by discovering relevant data sources and connecting to them. They then use SQL to explore, analyze, visualize, and integrate

- Distributed training and efficient scaling with the Amazon SageMaker Model Parallel and Data Parallel Libraries by Xinle Sheila Liu (AWS Machine Learning Blog) on April 16, 2024 at 4:18 pm

In this post, we explore the performance benefits of Amazon SageMaker (including SMP and SMDDP), and how you can use the library to train large models efficiently on SageMaker. We demonstrate the performance of SageMaker with benchmarks on ml.p4d.24xlarge clusters up to 128 instances, and FSDP mixed precision with bfloat16 for the Llama 2 model.

- Manage your Amazon Lex bot via AWS CloudFormation templates by Thomas Rindfuss (AWS Machine Learning Blog) on April 16, 2024 at 4:11 pm

Amazon Lex is a fully managed artificial intelligence (AI) service with advanced natural language models to design, build, test, and deploy conversational interfaces in applications. It employs advanced deep learning technologies to understand user input, enabling developers to create chatbots, virtual assistants, and other applications that can interact with users in natural language. Managing your

- A secure approach to generative AI with AWS by Anthony Liguori (AWS Machine Learning Blog) on April 16, 2024 at 4:00 pm

Generative artificial intelligence (AI) is transforming the customer experience in industries across the globe. Customers are building generative AI applications using large language models (LLMs) and other foundation models (FMs), which enhance customer experiences, transform operations, improve employee productivity, and create new revenue channels. The biggest concern we hear from customers as they explore the advantages of generative AI is how to protect their highly sensitive data and investments. At AWS, our top priority is safeguarding the security and confidentiality of our customers' workloads. We think about security across the three layers of our generative AI stack ...

- Cost-effective document classification using the Amazon Titan Multimodal Embeddings Model by Sumit Bhati (AWS Machine Learning Blog) on April 11, 2024 at 7:21 pm

Organizations across industries want to categorize and extract insights from high volumes of documents of different formats. Manually processing these documents to classify and extract information remains expensive, error prone, and difficult to scale. Advances in generative artificial intelligence (AI) have given rise to intelligent document processing (IDP) solutions that can automate the document classification,

- AWS at NVIDIA GTC 2024: Accelerate innovation with generative AI on AWS by Julie Tang (AWS Machine Learning Blog) on April 11, 2024 at 4:14 pm

AWS was delighted to present to and connect with over 18,000 in-person and 267,000 virtual attendees at NVIDIA GTC, a global artificial intelligence (AI) conference that took place March 2024 in San Jose, California, returning to a hybrid, in-person experience for the first time since 2019. AWS has had a long-standing collaboration with NVIDIA for

- Build an active learning pipeline for automatic annotation of images with AWS services by Yanxiang Yu (AWS Machine Learning Blog) on April 10, 2024 at 4:26 pm

This blog post is co-written with Caroline Chung from Veoneer. Veoneer is a global automotive electronics company and a world leader in automotive electronic safety systems. They offer best-in-class restraint control systems and have delivered over 1 billion electronic control units and crash sensors to car manufacturers globally. The company continues to build on a

- Knowledge Bases for Amazon Bedrock now supports custom prompts for the RetrieveAndGenerate API and configuration of the maximum number of retrieved results by Sandeep Singh (AWS Machine Learning Blog) on April 9, 2024 at 7:01 pm

With Knowledge Bases for Amazon Bedrock, you can securely connect foundation models (FMs) in Amazon Bedrock to your company data for Retrieval Augmented Generation (RAG). Access to additional data helps the model generate more relevant, context-specific, and accurate responses without retraining the FMs. In this post, we discuss two new features of Knowledge Bases for

- Knowledge Bases for Amazon Bedrock now supports metadata filtering to improve retrieval accuracy by Corvus Lee (AWS Machine Learning Blog) on April 8, 2024 at 7:23 pm

At AWS re:Invent 2023, we announced the general availability of Knowledge Bases for Amazon Bedrock. With Knowledge Bases for Amazon Bedrock, you can securely connect foundation models (FMs) in Amazon Bedrock to your company data using a fully managed Retrieval Augmented Generation (RAG) model. For RAG-based applications, the accuracy of the generated responses from FMs

- Build knowledge-powered conversational applications using LlamaIndex and Llama 2-Chat by Romina Sharifpour (AWS Machine Learning Blog) on April 8, 2024 at 5:03 pm

Unlocking accurate and insightful answers from vast amounts of text is an exciting capability enabled by large language models (LLMs). When building LLM applications, it is often necessary to connect and query external data sources to provide relevant context to the model. One popular approach is using Retrieval Augmented Generation (RAG) to create Q&A systems

- Use everyday language to search and retrieve data with Mixtral 8x7B on Amazon SageMaker JumpStart by Jose Navarro (AWS Machine Learning Blog) on April 8, 2024 at 4:53 pm

With the widespread adoption of generative artificial intelligence (AI) solutions, organizations are trying to use these technologies to make their teams more productive. One exciting use case is enabling natural language interactions with relational databases. Rather than writing complex SQL queries, you can describe in plain language what data you want to retrieve or manipulate.

- Boost inference performance for Mixtral and Llama 2 models with new Amazon SageMaker containers by Joao Moura (AWS Machine Learning Blog) on April 8, 2024 at 4:50 pm

In January 2024, Amazon SageMaker launched a new version (0.26.0) of Large Model Inference (LMI) Deep Learning Containers (DLCs). This version offers support for new models (including Mixture of Experts), performance and usability improvements across inference backends, as well as new generation details for increased control and prediction explainability (such as reason for generation completion

- [D] Simple Questions Thread by /u/AutoModerator (Machine Learning) on April 7, 2024 at 3:00 pm

Please post your questions here instead of creating a new thread. Encourage others who create new posts for questions to post here instead! Thread will stay alive until next one so keep posting after the date in the title. Thanks to everyone for answering questions in the previous thread!

- Improving Content Moderation with Amazon Rekognition Bulk Analysis and Custom Moderation by Mehdy Haghy (AWS Machine Learning Blog) on April 5, 2024 at 6:16 pm

Amazon Rekognition makes it easy to add image and video analysis to your applications. It’s based on the same proven, highly scalable, deep learning technology developed by Amazon’s computer vision scientists to analyze billions of images and videos daily. It requires no machine learning (ML) expertise to use and we’re continually adding new computer vision

- Understanding and predicting urban heat islands at Gramener using Amazon SageMaker geospatial capabilities by Abhishek Mittal (AWS Machine Learning Blog) on April 5, 2024 at 4:41 pm

This is a guest post co-authored by Shravan Kumar and Avirat S from Gramener. Gramener, a Straive company, contributes to sustainable development by focusing on agriculture, forestry, water management, and renewable energy. By providing authorities with the tools and insights they need to make informed decisions about environmental and social impact, Gramener is playing a

- Build a news recommender application with Amazon Personalize by Bala Krishnamoorthy (AWS Machine Learning Blog) on April 4, 2024 at 5:01 pm

With a multitude of articles, videos, audio recordings, and other media created daily across news media companies, readers of all types—individual consumers, corporate subscribers, and more—often find it difficult to find news content that is most relevant to them. Delivering personalized news and experiences to readers can help solve this problem, and create more engaging

- Nielsen Sports sees 75% cost reduction in video analysis with Amazon SageMaker multi-model endpoints by Eitan Sela (AWS Machine Learning Blog) on April 4, 2024 at 4:46 pm

This is a guest post co-written with Tamir Rubinsky and Aviad Aranias from Nielsen Sports. Nielsen Sports shapes the world’s media and content as a global leader in audience insights, data, and analytics. Through our understanding of people and their behaviors across all channels and platforms, we empower our clients with independent and actionable intelligence

- Seamlessly transition between no-code and code-first machine learning with Amazon SageMaker Canvas and Amazon SageMaker Studio by Rajakumar Sampathkumar (AWS Machine Learning Blog) on April 3, 2024 at 5:53 pm

Amazon SageMaker Studio is a web-based, integrated development environment (IDE) for machine learning (ML) that lets you build, train, debug, deploy, and monitor your ML models. SageMaker Studio provides all the tools you need to take your models from data preparation to experimentation to production while boosting your productivity. Amazon SageMaker Canvas is a powerful

- Build a contextual text and image search engine for product recommendations using Amazon Bedrock and Amazon OpenSearch Serverless by Sandeep Singh (AWS Machine Learning Blog) on April 3, 2024 at 3:35 pm

In this post, we show how to build a contextual text and image search engine for product recommendations using the Amazon Titan Multimodal Embeddings model, available in Amazon Bedrock, with Amazon OpenSearch Serverless.
