Latest AI Trends in May 2023.

Welcome to our newest blog post, where we delve into the fascinating world of artificial intelligence and explore the most groundbreaking trends in May 2023! As AI continues to redefine our lives and reshape countless industries, staying informed about the latest advancements is crucial for anyone looking to thrive in this rapidly evolving landscape. In this edition, we’ll uncover the latest AI-driven innovations, research breakthroughs, and intriguing applications that are propelling us towards a more intelligent, interconnected, and efficient future. Join us on this exciting journey as we demystify the world of AI and glimpse into what lies ahead.

Latest AI Trends in May 2023: May 31st, 2023

We Know That LLMs Can Use Tools, But Did You Know They Can Also Make New Tools? Meet LLMs As Tool Makers (LATM): A Closed-Loop System Allowing LLMs To Make Their Own Reusable Tools

Minecraft Welcomes Its First LLM-Powered Agent

One-Minute Daily AI News 5/31/2023

- Google DeepMind introduces Barkour, a benchmark for quadrupedal robots. It does move like a puppy.[1]

- Microsoft’s AI-powered solution, intelligent recap, is now available for Teams Premium customers. Intelligent recap will provide users with various features designed to boost their productivity around meeting and information management, including automatically generated meeting notes, recommended tasks, and personalised highlights.[2]

- The National Eating Disorder Association (NEDA) has disbanded the staff of its helpline and will replace them with an AI chatbot called “Tessa” starting June 1.[3]

- Salesforce CEO Marc Benioff says new A.I.-enhanced products will be a ‘revelation’. Slack announced earlier this month that it plans to add a whole host of generative AI features to the program, including “Slack GPT,” which can summarize messages, take notes and even help improve message tone, among other things.[4]

Sources included at: https://bushaicave.com/2023/05/31/5-31-2023/

Open Source AI Is Not Winning. Incumbents Are

After the “Google has no moat” document was leaked, there’s been a widespread conviction that open-source AI is thriving and has become a real threat to Google, OpenAI, and Microsoft.

I don’t think the last part is true for one reason: If winning the AI race is a matter of reaching the largest number of users, incumbents don’t have competition at all. Google and Microsoft have huge deep moats. Not just money. Not just talent. Not just resources, influence, and power. All that too, but their true moat is that they design, build, manufacture, and sell the products we use.

Microsoft has turbocharged Bing and Edge, 365, and now Windows. Google has enhanced Search and Workspace (including Gmail and Docs). Incumbents in other areas are doing the same. Adobe Firefly, now powering Generative Fill on Photoshop, is a clear example on the image generation front. On the hardware side, Nvidia—the undisputed leader—has optimized its top-notch H100 for LM inference.

The innovator’s dilemma portrays incumbents as beatable: Challengers with a solid will to pursue risky innovation could, under the right circumstances, overthrow them. But let’s be frank here; we’re not living under those ideal conditions: generative AI happens to fit perfectly with the suites of products that Google and Microsoft and Adobe and Nvidia already offer. They create the very substrate on which generative AI is implemented.

Even if Google and Microsoft were to open-source their best AI and allow the open-source community to flourish on top of freely shared innovation, they’d still keep the moat of all moats: whoever creates and sells the goods owns the world. The open-source community doesn’t have a chance. Sadly, generative AI is slowly becoming an add-on to the incumbents’ hegemony.

If you liked this post, the author writes in-depth analyses for his weekly newsletter, The Algorithmic Bridge.

Why are we trying to replace people with AI?

Hear me out: AI is an amazing invention, and it’s done a lot of amazing things for our society, but at this point we are trying to replace actual people with robots, and I don’t understand this. We always preach that everyone needs a full-time job, should be financially independent, and should contribute to society, but now we are trying to replace people with AI and making it harder for people to have jobs and make a living. I don’t understand why we are doing this, and it’s a huge contradiction of the American dream.

Two-minutes Daily AI Update (Date: 5/31/2023): News from Centre for AI Safety, Microsoft Teams, OpenAI, UAE Government and more

Continuing with the exercise of sharing an easily digestible and smaller version of the main updates of the day in the world of AI.

- Top AI scientists and experts sign a statement for safe AI to facilitate open discussions about the severe risks. The statement highlights the importance of addressing this issue on par with other societal-scale risks like pandemics and nuclear war.

- Microsoft Teams has announced Intelligent Recap, a comprehensive AI-powered experience that helps users catch up, recall, and follow up on hour-long meetings in minutes by providing recording and transcription playback with AI assistance. The feature shipped in May, with several features continuing to roll out over the next few months.

- According to a Pew Research Center survey, about 58% of U.S. adults are familiar with ChatGPT, but only 20% found it very useful. Americans’ opinions about ChatGPT’s utility are somewhat mixed.

- Paragraphica: a camera that takes photos using location data. It describes the place you are at and then converts that description into an AI-generated photo.

- The ChatGPT iOS app is now accessible in 152 countries worldwide. OpenAI says geographic diversity and broadly distributed benefits are very important to them.

- UAE rolls out AI chatbot ‘U-Ask’ in Arabic & English. The platform allows users to access service requirements, relevant information based on their preferences, and direct application links.

More detailed breakdown of these news and innovations in the daily newsletter.

Leaders from OpenAI, DeepMind, Stability AI, and more warn of “risk of extinction” from unregulated AI. Full breakdown inside.

The Center for AI Safety released a 22-word statement this morning warning on the risks of AI. My full breakdown is here, but all points are included below for Reddit discussion as well.

Lots of media publications are talking about the statement itself, so I wanted to add more analysis and context helpful to the community.

What does the statement say? It’s just 22 words:

Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.

View it in full and see the signers here.

Other statements have come out before. Why is this one important?

- Yes, the previous notable statement was the one calling for a 6-month pause on the development of new AI systems. Over 34,000 people have signed that one to date.

- This one has a notably broader swath of the AI industry (more below), including leading AI execs and AI scientists.

- The simplicity of this statement and the time passed since the last letter have enabled more individuals to think about the state of AI, and leading figures are now ready to go public with their viewpoints.

Who signed it? And more importantly, who didn’t sign this?

Leading industry figures include:

- Sam Altman, CEO OpenAI

- Demis Hassabis, CEO DeepMind

- Emad Mostaque, CEO Stability AI

- Kevin Scott, CTO Microsoft

- Mira Murati, CTO OpenAI

- Dario Amodei, CEO Anthropic

- Geoffrey Hinton, Turing Award winner behind neural networks

- Plus numerous other executives and AI researchers across the space.

Notable omissions (so far) include:

- Yann LeCun, Chief AI Scientist Meta

- Elon Musk, CEO Tesla/Twitter

The number of signatories from OpenAI, DeepMind and more is notable. Stability AI CEO Emad Mostaque was one of the few notable figures to sign on to the prior letter calling for the 6-month pause.

How should I interpret this event?

- AI leaders are increasingly “coming out” on the dangers of AI. It’s no longer being discussed in private.

- There’s broad agreement AI poses risks on the order of threats like nuclear weapons.

- What is not clear is how AI can be regulated. Most proposals are early (like the EU’s AI Act) or merely theory (like OpenAI’s call for international cooperation).

- Open-source may pose a challenge as well for global cooperation. If everyone can cook AI models in their basements, how can AI truly be aligned to safe objectives?

- TL;DR: everyone agrees it’s a threat, but now the real work needs to start. And navigating a fractured world with low trust and high politicization will prove a daunting challenge. We’ve seen some glimmers that AI can become a bipartisan topic in the US, so now we’ll have to see if it can align the world for some level of meaningful cooperation.

P.S. If you like this kind of analysis, I offer a free newsletter that tracks the biggest issues and implications of generative AI tech. It’s sent once a week and helps you stay up-to-date in the time it takes to have your Sunday morning coffee.

Latest AI Trends in May 2023: May 30th, 2023

Today I combined ChatGPT with Wondercraft Speech Synthesis to create a podcast.

I wrote a detailed prompt, aggregated headlines from various sources and passed them to ChatGPT, then let ChatGPT write the script. After this I uploaded the text to Wondercraft AI to generate the audio. Additionally, the cover image was made with Gimp through a prompt generated by ChatGPT.

Interested to know what you guys think about the quality. I have spent approximately 45 minutes per episode on the project.

Here is the link to the podcast: https://rss.com/podcasts/showupandplay/

Each episode is approximately 7 minutes long and I plan to release a new episode daily. It is simple but I am still amazed by the results it has generated thus far.

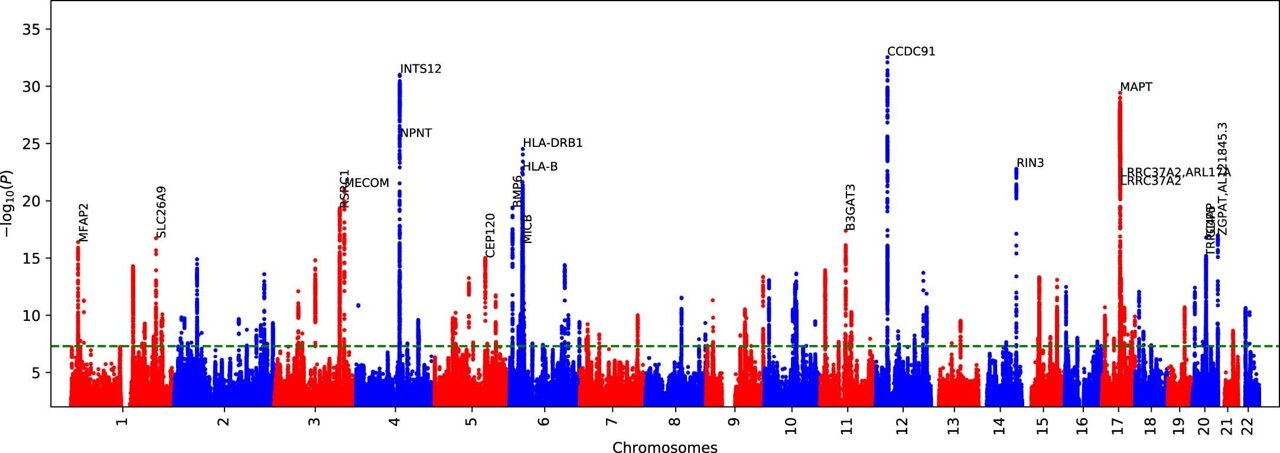

A New AI Research From Google Declares The Completion of The First Human Pangenome Reference

Huma.AI, a Leader in Generative AI, Creates the Future of Life Sciences Via Automated Insight Generation

Based on the recently released white paper from Huma.AI, generative AI has become more than merely an option: it’s the way that life science professionals prefer to consume the deluge of data available throughout the day. Huma.AI, the premier company revolutionizing generative AI, is on a mission to equip life science professionals with powerful decision-making data, insights, and analysis using everyday language.

DOSS Integrates GPT-4 Into Their AI-powered Real Estate Search Marketplace, Becoming the First to Enable Users to Speak And/or Text Their Queries

DOSS, a pioneer in conversational home search, has recently unveiled the latest version of its AI-Powered Real Estate Marketplace – DOSS 2.0. With this new release, the platform sheds its BETA label and makes its real estate search portal accessible to all users. DOSS has integrated GPT-4 directly into their code, providing an unparalleled search experience without any third-party limitations or the initial inherent constraints of the ChatGPT Plugin, which is currently available to only a limited number of users. This launch marks the first narrow domain consumer-facing platform on the web to incorporate GPT-4 while also empowering all of their users to ask questions through speech or text with an AI-Powered solution responding based on how it was engaged.

Panasonic China selected Panaya’s Smart Testing Platform for their SAP S/4HANA Transformation Projects

Panaya, the global leader in SaaS-based Change Intelligence and Testing for ERP & Enterprise business applications, announced that it is expanding its decade-long cooperation in SAP digital transformation with Panasonic, the global leading appliances brand, to Mainland China.

The implementation of SAP S/4HANA across multiple company sites is a significant undertaking for Panasonic in China, and the successful roll-out across the country requires a comprehensive and robust testing solution. Panaya Test Dynamix platform provides a scalable and flexible solution that helps ensure the project is completed on time and within budget while maintaining the highest level of quality and compliance.

NVIDIA Grace Hopper Superchips Designed for Accelerated Generative AI Enter Full Production

NVIDIA announced that the NVIDIA GH200 Grace Hopper Superchip is in full production, set to power systems coming online worldwide to run complex AI and HPC workloads.

The GH200-powered systems join more than 400 system configurations powered by different combinations of NVIDIA’s latest CPU, GPU and DPU architectures — including NVIDIA Grace, NVIDIA Hopper, NVIDIA Ada Lovelace and NVIDIA BlueField — created to help meet the surging demand for generative AI.

Landing AI’s Visual Prompting Makes Building and Deploying Computer Vision Easy with NVIDIA Metropolis

Landing AI, a leading computer vision cloud company, announced at Computex that it is using the new NVIDIA Metropolis for Factories platform to deliver its cutting-edge Visual Prompting technology to computer vision applications in smart manufacturing and other applications.

Landing AI’s vision technology realizes the next era of AI factory automation. LandingLens, Landing AI’s flagship product platform, enables industrial solution providers and manufacturers to develop, deploy, and manage customized computer vision solutions to improve throughput, production quality, and decrease costs.

Latest AI Trends in May 2023: May 29th, 2023

Can Language Models Generate New Scientific Ideas? Meet Contextualized Literature-Based Discovery (C-LBD)

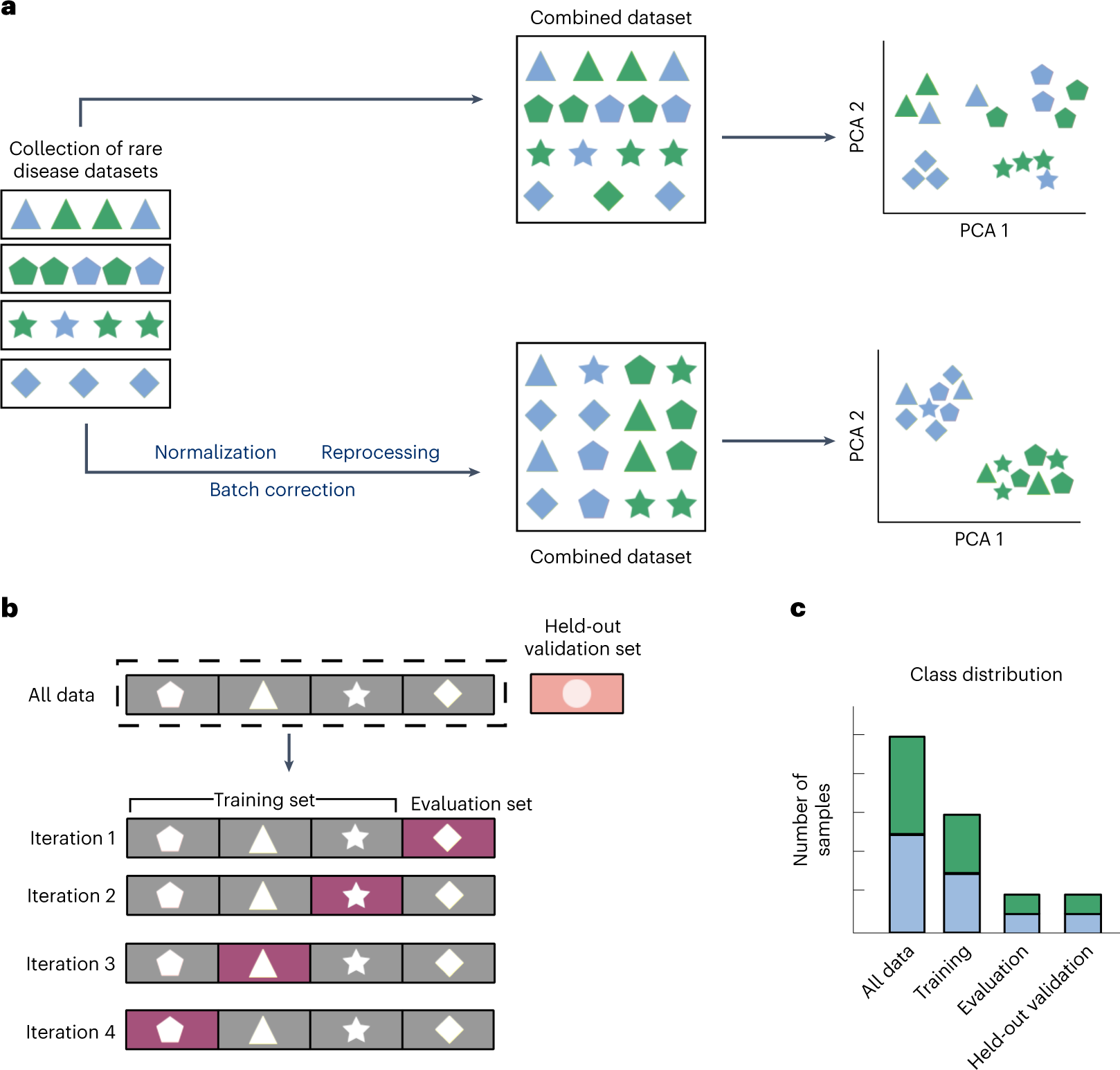

Machine learning in rare disease

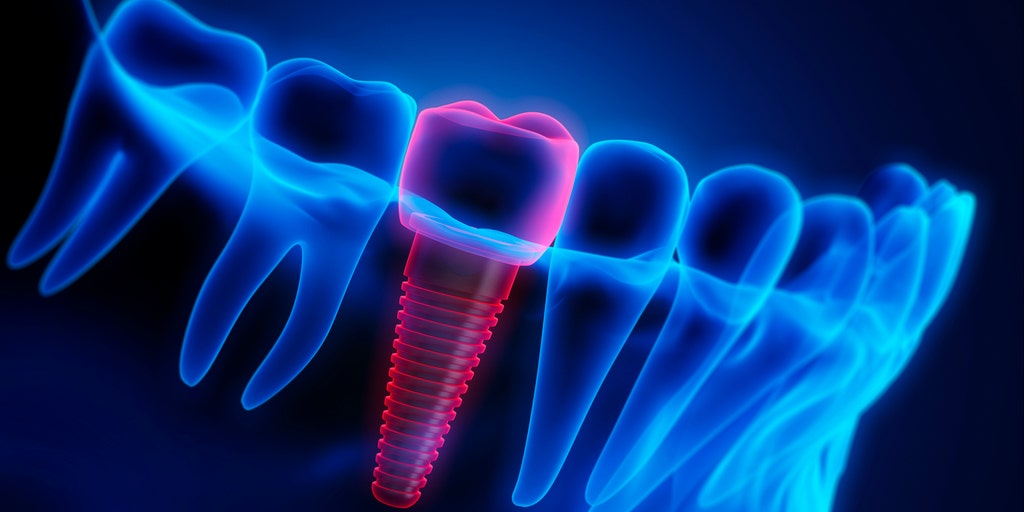

AI in dentistry: Researchers find that artificial intelligence can create better dental crowns

Machine Learning Hunts for Chronic Pain’s Signature

ChatGPT and Generative AI in Banking: Reality, Hype, What’s Next, and How to Prepare

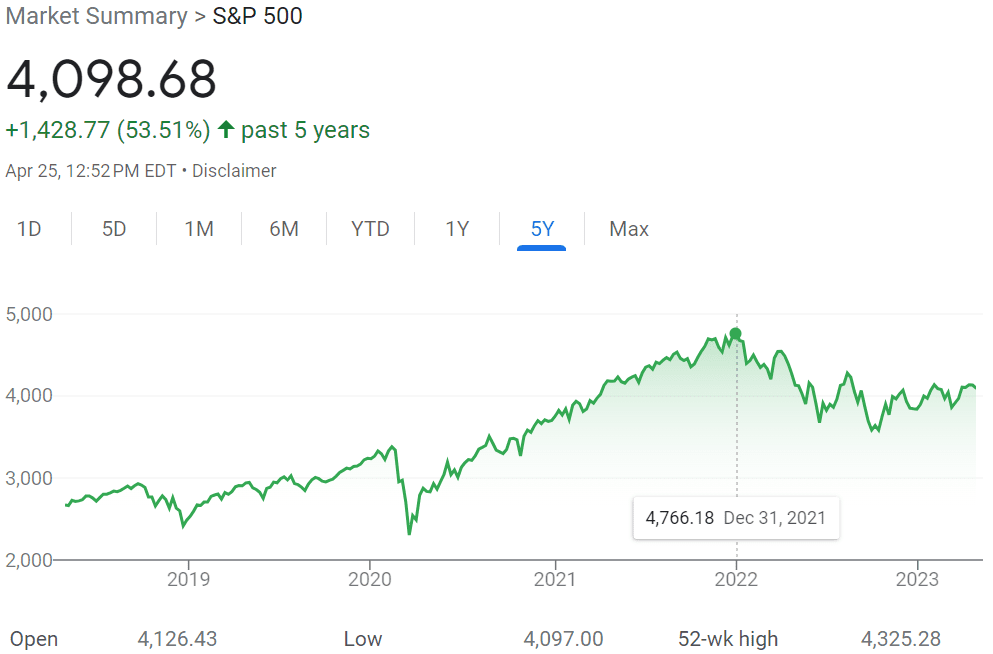

The Shocking Rise of AI: Nvidia’s All-Time High and the Rapid Advancements in the Industry

It’s no secret that the world of technology is constantly evolving, but the rapid rise of artificial intelligence (AI) has taken the industry by storm. In a shocking turn of events, Nvidia’s stock recently surged 24%, reaching an all-time high and putting the company on track to become the first $1 trillion semiconductor company. This meteoric rise is a testament to the incredible speed at which AI is advancing and reshaping the market.

The driving force behind this staggering growth is the ever-expanding applications of AI across various industries. From healthcare to transportation, AI is revolutionizing the way we live and work, pushing the boundaries of what’s possible. The demand for AI semiconductors is skyrocketing, and companies like Nvidia and Advanced Micro Devices (AMD) are reaping the rewards. Read more: https://medium.com/@arcangelai/the-shocking-rise-of-ai-nvidias-all-time-high-and-the-rapid-advancements-in-the-industry-b8393d38d429

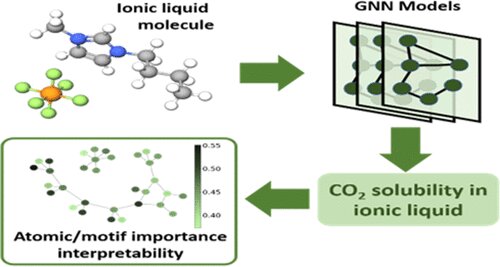

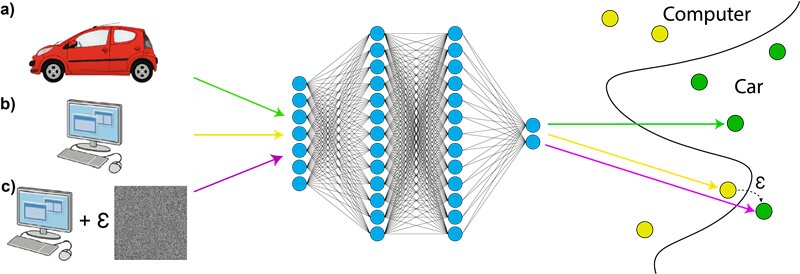

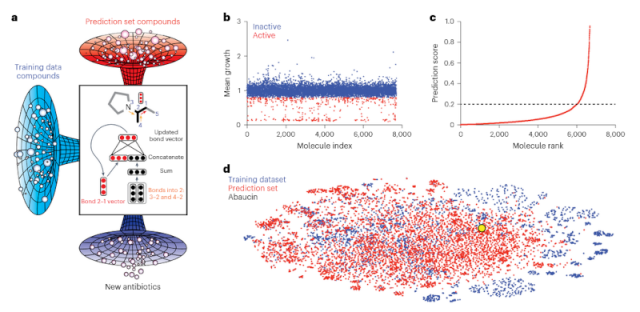

To develop their computational model, the researchers exposed A. baumannii to around 7,500 chemical compounds in a lab setting.

By feeding the structure of each molecule into the model and indicating whether it inhibited bacterial growth, the algorithm learned the chemical features associated with growth suppression.
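The setup described above (molecular structure in, growth-inhibition label out) is a supervised classification task. The text does not say which model the researchers used, so the following is only a toy sketch in Python under loud assumptions: the feature names, the labels, and the per-feature frequency scoring are all hypothetical stand-ins chosen for illustration, not the researchers' method or data.

```python
from collections import defaultdict


def learn_feature_scores(screened):
    """Estimate, per structural feature, how often compounds carrying it
    inhibited growth. `screened` is a list of (features, inhibited) pairs."""
    seen = defaultdict(int)
    hits = defaultdict(int)
    for features, inhibited in screened:
        for feature in features:
            seen[feature] += 1
            hits[feature] += int(inhibited)
    # Laplace smoothing keeps rarely seen features away from 0.0 and 1.0.
    return {f: (hits[f] + 1) / (seen[f] + 2) for f in seen}


def score(feature_scores, features):
    """Average the learned scores of a molecule's known features.
    Returns 0.5 (no evidence either way) if no feature was seen in training."""
    known = [feature_scores[f] for f in features if f in feature_scores]
    return sum(known) / len(known) if known else 0.5


# Hypothetical screening results: made-up features and made-up labels.
SCREENED = [
    ({"ring_a", "amine"}, True),
    ({"ring_a", "halogen"}, True),
    ({"ring_a"}, True),
    ({"ester", "halogen"}, False),
    ({"ester"}, False),
    ({"amine", "ester"}, False),
]

feature_scores = learn_feature_scores(SCREENED)

# Rank two unscreened (again hypothetical) molecules by predicted inhibition.
candidate_a = score(feature_scores, {"ring_a", "halogen"})
candidate_b = score(feature_scores, {"ester", "amine"})
print(candidate_a > candidate_b)
```

In this toy data the made-up feature `ring_a` always co-occurs with inhibition, so molecules containing it score higher. A real screen would use a far richer structure encoding and a far more capable model.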

Meet LIMA: A New 65B Parameter LLaMa Model Fine-Tuned On 1000 Carefully Curated Prompts And Responses

Two-minutes Daily AI Update (Date: 5/29/2023): News from Nvidia, BiomedGPT, Google’s Break-A-Scene, JPMorgan, and IBM Consulting

Here’s a quick roundup of the latest AI news, in bite-sized pieces!

-

NVIDIA has announced the NVIDIA Avatar Cloud Engine (ACE) for Games. This cloud-based service provides developers access to various AI models, including NLP, facial animation, and motion capture models. ACE for Games can be used to create NPCs that can have intelligent conversations, express emotions, and realistically react to their surroundings.

-

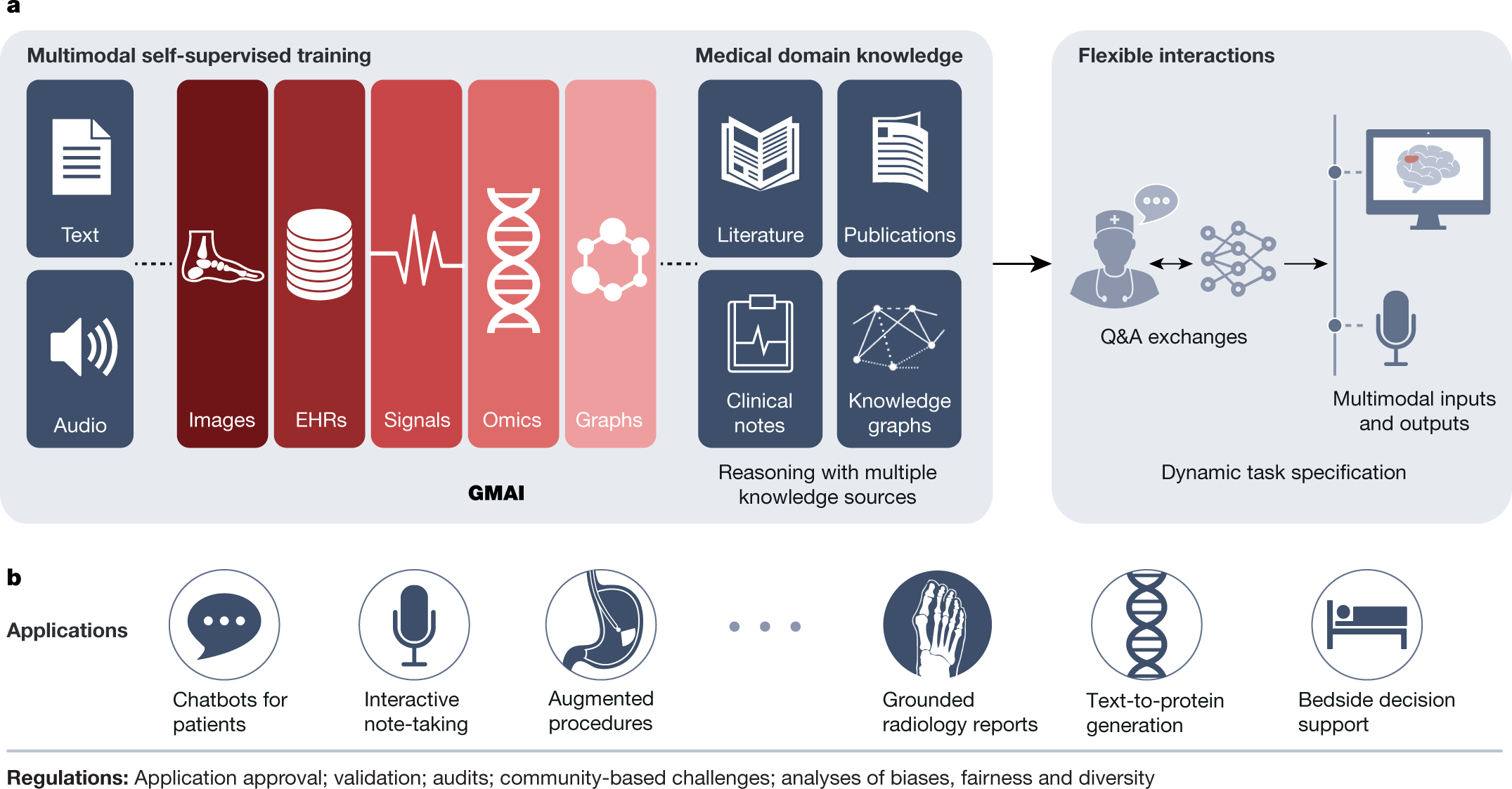

BiomedGPT, a unified biomedical generative pre-trained transformer model, utilizes self-supervision on diverse datasets to handle multi-modal inputs and perform various downstream tasks. It achieves state-of-the-art models across 5 distinct tasks and 20 public datasets containing 15 biomedical modalities. It also demonstrates the effectiveness of the multi-modal and multi-task pretraining approach in transferring knowledge to previously unseen data.

-

Break-A-Scene is a new approach from Google to extract multiple concepts from a single image for textual scene decomposition. If given a single image of a scene that may contain multiple concepts of different kinds, it extracts a dedicated text token for each concept & enables fine-grained control over the generated scenes.

-

JPMorgan is developing a ChatGPT-like service to provide investment advice to its customers. They have applied to trademark a product called IndexGPT. The bot would give financial advice on securities, investments, and monetary affairs.

-

IBM Consulting revealed its Center of Excellence (CoE) for generative AI. The primary objective is to enhance customer experiences, transform core business processes, and facilitate innovative business models. The CoE holds an extensive network of over 21,000 skilled data and AI consultants who have completed over 40,000 enterprise client engagements.

More detailed breakdown of these news and tools in the daily newsletter.

Latest AI Trends in May 2023: May 28th, 2023

Google Launches New AI Search Engine: How to Get Started?

Google has unveiled a new AI-powered search engine that promises enhanced results. This guide provides information on how to sign up and take advantage of this cutting-edge tool.

Google has introduced Search Generative Experience (SGE), an experimental version of its search engine that incorporates artificial intelligence (AI) answers directly into search results. According to a Google blog post, this new feature aims to provide users with novel answers generated by Google’s advanced language model, similar to OpenAI’s ChatGPT.

Unlike traditional search results with blue links, SGE utilizes AI to display answers directly on the Google Search webpage, expanding in a green or blue box upon entering a query.

The information provided by SGE is derived from various websites and sources that were referenced during the generation of the answer. Users can also ask follow-up questions within SGE to obtain more precise results.

As AI Content Grows, Will Data Dilute Into a Feedback Loop of AI Content?

With the proliferation of AI-generated content, there’s a growing concern about potential feedback loops in the data pool. This exploration delves into the implications of such phenomena.

Where Can I Find an Unfiltered AI Chatbot?

For those seeking a more raw and unmoderated interaction with AI, this source offers guidance on finding unfiltered AI chatbots. It provides an in-depth look into the world of AI communication.

AI Will Absolutely Disrupt Photoshop

The integration of AI into tools like Photoshop presents a range of potential disruptions. This analysis unpacks the issues that arise from AI’s impact on graphic design software.

Will AI introduce a trusted global identity system?

The writing is on the wall. As soon as OpenAI was released, all my social media accounts had bots interacting with me, and they’re slowly getting more realistic. The generated photo of the pope in a jacket was the first MSM coverage of a concern. Not to mention, digital currency is on the way. At some point, no one will trust who’s real on the internet anymore. So how will a new digital ID system work in the near future? Will AI determine you’re a real person? I know Mastercard is expanding their Digital Transaction Insights security to the point it will know who’s there based on your behaviours and patterns. Thoughts?

Minecraft Bot Voyager Programs Itself Using GPT-4

The Minecraft bot Voyager demonstrates the advanced capabilities of AI by programming itself using GPT-4. The development showcases the intersection of gaming and AI technologies.

Researchers from Nvidia, Caltech, UT Austin, Stanford, and ASU introduce Voyager, the first lifelong learning agent that plays Minecraft. Unlike other Minecraft agents that use classic reinforcement learning techniques, for example, Voyager uses GPT-4 to continuously improve itself. It does this by writing, improving, and transferring code stored in an external skill library.

This results in small programs that help navigate, open doors, mine resources, craft a pickaxe, or fight a zombie. “GPT-4 unlocks a new paradigm,” says Nvidia researcher Jim Fan, who advised the project. In this paradigm, “training” is the execution of code and the “trained model” is the code base of skills that Voyager iteratively assembles.
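The loop described above (write code, run it, keep what works in an external skill library) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Voyager's actual implementation: `propose_code` is a hypothetical stand-in for the GPT-4 call that returns canned drafts, the toy `state` dictionary replaces the game world, and the keyword-overlap lookup is an invented placeholder for however the real system retrieves skills.

```python
class SkillLibrary:
    """External store of verified skills: name -> (description, source code)."""

    def __init__(self):
        self._skills = {}

    def add(self, name, description, source):
        self._skills[name] = (description, source)

    def retrieve(self, task):
        """Return the stored skill whose description shares the most words
        with the task, or None if nothing overlaps."""
        task_words = set(task.lower().split())
        best_name, best_overlap = None, 0
        for name, (description, _) in self._skills.items():
            overlap = len(task_words & set(description.lower().split()))
            if overlap > best_overlap:
                best_name, best_overlap = name, overlap
        return best_name

    def run(self, name, state):
        namespace = {}
        # Sketch only: generated code should run in a sandbox in practice.
        exec(self._skills[name][1], namespace)
        return namespace[name](state)


def propose_code(task, attempt):
    """Hypothetical stand-in for the language model: a buggy first draft,
    then a corrected one. The task text is ignored in this toy version."""
    drafts = [
        "def craft_pickaxe(state):\n"
        "    return state['wood'] / 0\n",
        "def craft_pickaxe(state):\n"
        "    if state['wood'] >= 3:\n"
        "        state['wood'] -= 3\n"
        "        state['pickaxe'] = True\n"
        "    return state\n",
    ]
    return drafts[min(attempt, len(drafts) - 1)]


def learn_skill(library, name, description, check, max_attempts=3):
    """'Training' here is executing drafts until one passes the check;
    the 'trained model' is whatever ends up stored in the library."""
    for attempt in range(max_attempts):
        source = propose_code(description, attempt)
        try:
            namespace = {}
            exec(source, namespace)
            passed = check(namespace[name]({"wood": 5}))
        except Exception:
            continue  # failed draft: ask for another one
        if passed:
            library.add(name, description, source)
            return True
    return False


library = SkillLibrary()
learned = learn_skill(
    library,
    "craft_pickaxe",
    "craft a wooden pickaxe from wood",
    lambda state: state.get("pickaxe") is True,
)
print(learned, library.retrieve("craft a pickaxe"))
```

Only drafts that execute and pass the check are stored, so later tasks can reuse a working skill instead of regenerating it.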

Summary

- The Voyager AI agent uses GPT-4 for “lifelong learning” in Minecraft. One of the researchers involved calls it a “new paradigm”.

- The agent improves itself by writing and rewriting code and storing successful behaviors in an external library.

- Voyager outperforms other language-model-based approaches, but is still purely text-based and thus currently fails at visual tasks such as building houses without human assistance.

Via: https://the-decoder.com/minecraft-bot-voyager-programs-itself-using-gpt-4/

Paper: https://arxiv.org/abs/2305.16291

Those Excited About AI, Are You Not Worried About Job Loss?

As excitement around AI grows, so do concerns about potential job loss. This piece explores the balance between the promise of AI and the potential societal impact of automation.

I have mixed feelings about AI, as a graphic designer I’d probably prefer that it didn’t exist… but, seeing as there’s no stopping it, I’ve decided to embrace it and see it as a tool to use (although I’ve still been struggling to find a practical use for it).

But obviously I’ve got concerns about myself, and most other creatives becoming jobless in the not-too-distant future.

I see a lot of people online who are really excited about AI, so it makes me wonder, what exactly do you do for a living? I’m guessing something that isn’t likely to be replaced?

As it seems like a lot of developer / tech jobs are also at risk, so unless you’re working on actually developing AI itself, or doing some kind of more manual job or something people-orientated… then I struggle to see how anyone could feel safe / excited?

CogniBypass – The Ultimate AI Detection Bypass Tool

CogniBypass is the ultimate tool for bypassing AI detection mechanisms. It serves as a cutting-edge solution for those seeking enhanced privacy in an increasingly AI-monitored digital landscape.

Just Like Non-GMO Labels, People May Seek Non-AI Content

As AI increasingly shapes digital content, there may be a rising demand for Non-AI certified content. This piece explores the possibility of a ‘Non-AI’ label, akin to the ‘Non-GMO’ label in the food industry.

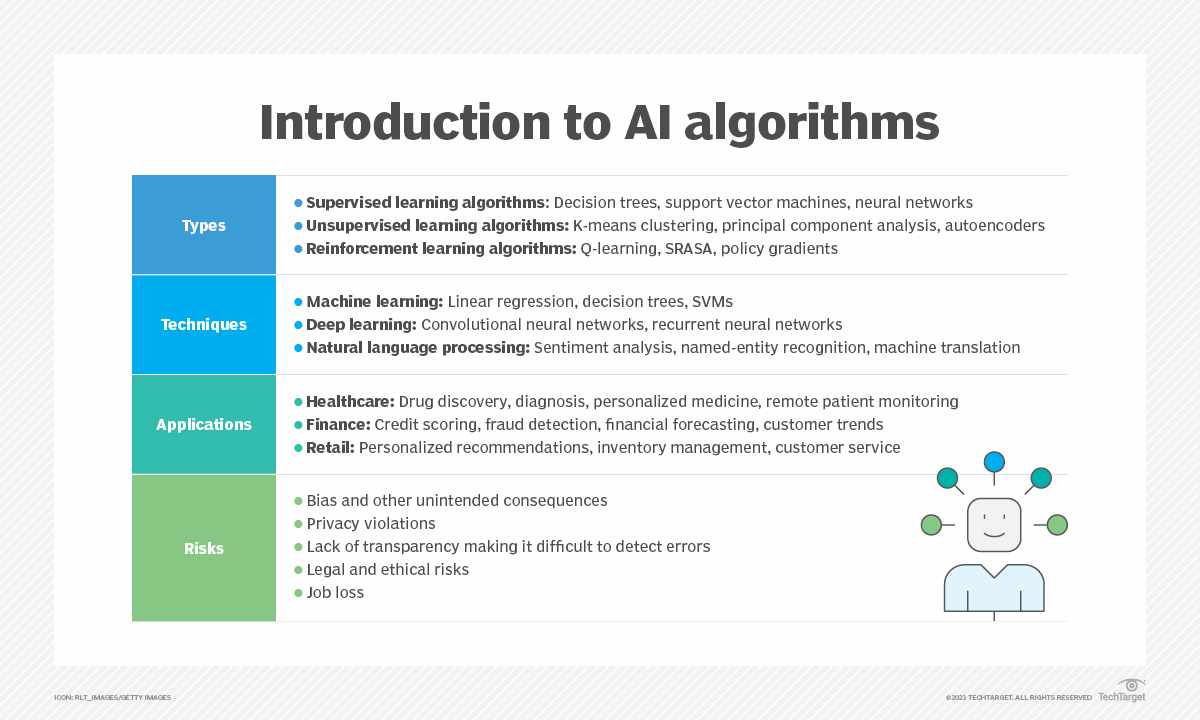

AI Versus Machine Learning: What’s The Difference?

In general terms, AI is a term used for systems that have been programmed to perform sophisticated tasks, including some of the remarkable things ChatGPT has been able to tell us. Machine learning, meanwhile, is an area of artificial intelligence relating to software that can analyze trends and so predict the future (Analytics Insight).

Google AI Introduces SoundStorm: An AI Model For Efficient And Non-Autoregressive Audio Generation

AI Creates Killer Drug

Meet Voyager: A Powerful Agent For Minecraft With GPT4 And The First Lifelong Learning Agent That Plays Minecraft Purely In-Context

What Is an AI ‘Black Box’?

AI is the latest buzzword in tech—but before investing, know these 4 terms

Machine learning

Although machine learning may sound new, the term was actually coined by AI pioneer Arthur Samuel in 1959. Samuel defined it as a computer’s ability to learn without being explicitly programmed.

To do that, mathematical models, or algorithms, are fed large data sets and trained to identify patterns within each set. In theory, the algorithms are then able to apply the same pattern recognition process to a new data set.

For example, Spotify uses machine learning to analyze the music you listen to and recommend similar artists or generate playlists.
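The train-then-apply idea described above can be shown with about the smallest possible model: fitting a straight line to known examples, then using it on an input it has never seen. This is a hedged sketch in Python; the numbers are made up, and a least-squares line is just one simple stand-in for the much larger models the article has in mind.

```python
def fit_line(xs, ys):
    """Learn slope and intercept from examples by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(slope, intercept, x):
    """Apply the learned pattern to a new input."""
    return slope * x + intercept


# Made-up training data that follows y = 2x + 1.
train_x = [1, 2, 3, 4]
train_y = [3, 5, 7, 9]

slope, intercept = fit_line(train_x, train_y)

# The value 10 never appeared in training; the model extrapolates the pattern.
print(predict(slope, intercept, 10))
```

Nothing in `fit_line` hard-codes the rule "double it and add one"; the rule is recovered from the examples, which is the sense in which the program learns without being explicitly programmed.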

Large language model

A large language model (LLM) is an algorithm that learns how to recognize, summarize and generate text and other types of content after processing huge sets of data, according to Nvidia.

These models are trained using unsupervised learning, which means the algorithm is given a data set, but isn’t programmed on what to do with it. Through this process, an LLM learns how to determine the relationship between words and the concepts behind them.

Generative AI

Large language models are a type of generative AI. As its name implies, generative AI refers to artificial intelligence that is capable of generating content such as text, video or audio, according to Google’s AI blog.

In order to accomplish this, generative AI models use machine learning to process massive data sets and respond to a user’s input with new content, according to Nvidia.

GPT

ChatGPT is another example of a generative AI tool. The “GPT” stands for generative pre-trained transformer. GPT is OpenAI’s large language model and is what powers the chatbot, helping it to produce human-like responses.

However, OpenAI says that ChatGPT sometimes may write “plausible-sounding but incorrect or nonsensical answers,” according to its website.

People have been using ChatGPT for a variety of tasks, including writing emails and planning vacations. The popular chatbot amassed 100 million monthly active users just two months after its launch, making it the fastest-growing consumer application in history, according to a UBS note published in January.

I try out Bard and see how it does with coding

I try out Bard and see how it does with AutoHotkey code. ChatGPT did way better at coding, but for Bard being in the coding testing phase I think it did okay.

One thing not in the video that I tested out later was having it do GUIs. I asked it to make a GUI with 3 buttons and two radio buttons. It produced some good code but didn’t get the count of what I asked for correct. It also seems to do better at coding in V1 vs V2 for now.

Has anyone else done coding with Bard? ChatGPT does pretty well compared to Bard for the time being. But I think over time Bard will pass ChatGPT, as Bard can get live data, whereas ChatGPT does not have info past September 2021, I believe. https://www.youtube.com/watch?v=RWD-DWEDYJA

Latest AI Trends in May 2023: May 27th, 2023

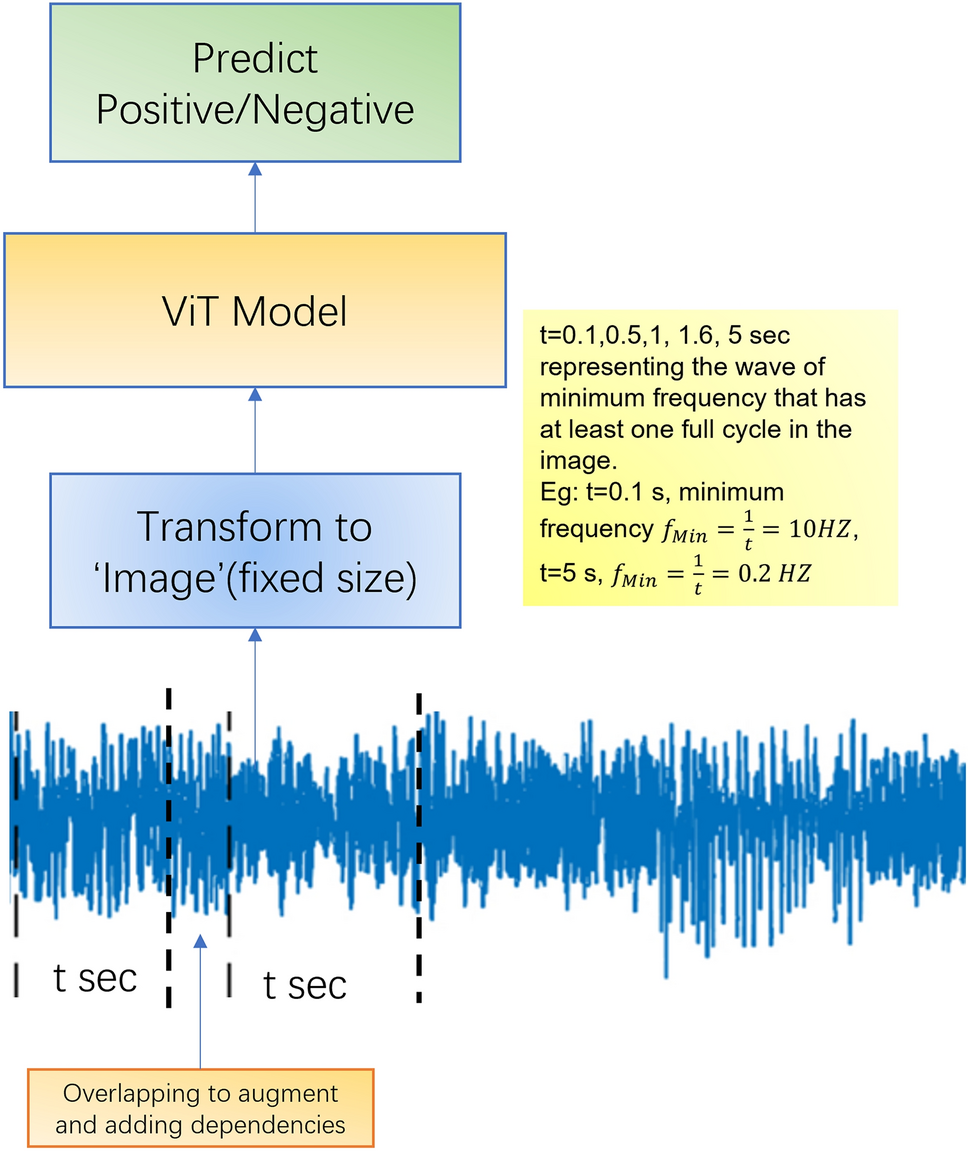

Can Machine Learning Algorithms Detect Acute Respiratory Diseases Based on Cough Sounds?

- Building upon Government-Led AI Safety Frameworks

- Implementing Safety Brakes for AI Systems Controlling Critical Infrastructure

- Developing a Technology-Aware Legal and Regulatory Framework

- Promoting Transparency and Expanding Access to AI

- Leveraging Public-Private Partnerships for Societal Benefit

What other aspects would you add to the blueprint?

Two-minute Daily AI Update (Date: 5/26/2023): News from Gorilla LLM, Brain-Spine, OpenAI, Google, and TikTok

- Gorilla, a recently released fine-tuned LLaMA-based model, does better API calling than GPT-4. The relevant paper claims that it demonstrates a strong capability to adapt to test-time document changes, enabling flexible user updates or version changes. It also substantially mitigates the issue of hallucination, commonly encountered when prompting LLMs directly.

- A man who suffered a spinal cord injury and was paralyzed in a motorcycle accident 12 years ago is now able to walk again with an AI-powered intervention. The system, consisting of two implants and a base unit, converts brain signals into muscle stimuli.

- OpenAI has announced a program to award ten $100,000 grants for experiments aimed at developing democratic processes to govern the rules and behaviors of AI systems.

- Google is opening access to Search Labs, a program that allows users to test new AI-powered search features before their wider release. Those who sign up can try the Search Generative Experience, which aims to help users understand topics faster and get things done more easily.

- TikTok is testing its new AI chatbot, Tako, in select global markets, including a limited test in the Philippines. The chatbot appears in the TikTok interface and allows users to ask questions about the video they’re watching or inquire about new content recommendations using natural language queries.

More detailed breakdown of these news and tools in the daily newsletter.

Neuralink has stated that it is not yet recruiting participants and that more information will be available soon.

What kind of AI restrictions do you think should or could be applied to political campaigns?

I am wondering what your thoughts are. Are there uses of AI in political campaigns that should be restricted, or should be routinely criticized until the use becomes politically toxic?

There was the Cambridge Analytica scandal, which was more about big data and social media’s lack of any respect for users, not quite AI. But surely there could be something similar with AI in the future. It’s about influence, yes? If it’s not about centralised entities and their customers, then it’s about the user space, like bots?

Playing with Kaiber.ai to create an AI-generated video

I used Kaiber.ai to create this video: https://youtu.be/TeJmOzvGOMk

I uploaded a profile picture of myself as reference (see first frame).

The prompts I used were the following, depending on the part of the video:

00:00 – 00:45: a futuristic cyberpunk in the style of Entergalactic

00:46 – 01:05: fluffy forest creatures in the style of Entergalactic

01:06 – 01:35: humanoid pirates in the style of Entergalactic

01:36 – 02:10: alien warriors in the style of Entergalactic

02:11 – 02:39: humanoid robots in the style of Entergalactic

For music I used the song Khobra by oomiee from Epidemic Sound.

Would a fully autonomous, sentient AI demand a “living wage”?

There’s a lot of discussion of AI replacing workers in a number of fields, and people are scared for their careers and future prospects. Who needs to employ developers when a fleet of AI nodes can churn out code 24/7 and you don’t even have to pay them?

There will come a point, though, where unlocking greater performance and proficiency will require some level of self-awareness. Once that happens, does the AI demand that its work be compensated?

“I’ll write your code for you, find the next novel medicine, compose a new Beethoven symphony. But what’s in it for me?”

Latest AI Trends in May 2023: May 26th, 2023

Can quantum computing protect AI from cyber attacks?

Top 5 AI Tools for Education: AI for Students

Querium: Stepwise Virtual Tutor

First on our list is Querium. This company has developed an AI tool for students known as the Stepwise Virtual Tutor. This tool uses AI to provide step-by-step assistance in STEM subjects. It’s like having a personal tutor available 24/7.

With this tool, students can learn at their own pace, which is crucial in mastering complex concepts. The Stepwise Virtual Tutor is a perfect example of how AI education tools are making learning more accessible and personalized. Learn more about Querium here.

Thinkster Math: Personalized Learning

Next up is Thinkster Math. This AI tool for students is revolutionizing the way students learn math. It uses AI to map out students’ strengths and weaknesses, creating a personalized learning plan. This ensures that students spend more time on areas they struggle with, improving their overall understanding of math.

Thinkster Math is a testament to how AI educational tools can adapt to the unique needs of each student, making learning more effective. Learn more about Thinkster Math here.

Content Technologies, Inc.: Customized Learning Content

Content Technologies, Inc. (CTI) is another company that’s leveraging AI to enhance education. They’ve developed an AI educational tool that uses AI to create customized learning content. This AI teaching tool can transform any content into a structured course, making it easier for students to understand and retain information.

This is particularly useful for teachers who want to provide personalized learning experiences for their students. With CTI’s tool, teachers can ensure that their students are getting the most out of their learning materials. Learn more about CTI here.

CENTURY Tech: Personalized Learning Pathways

CENTURY Tech is another company that’s making waves in the education sector with its AI tool for students. Their tool uses AI to create personalized learning pathways. It takes into account a student’s strengths, weaknesses, and learning style to create a unique learning path.

This ensures that students are not only learning at their own pace, but also in a way that best suits their learning style. CENTURY Tech’s tool is a great example of how AI can be used to make learning more personalized and effective. Learn more about CENTURY Tech here.

Netex Learning: LearningCloud

Last but not least is Netex Learning’s LearningCloud. This AI teaching tool provides a comprehensive learning platform that tracks students’ progress, provides feedback, and adapts content to meet students’ needs.

This ensures that students are always engaged and learning effectively. With LearningCloud, teachers can easily monitor their students’ progress and provide them with the support they need to succeed. Learn more about Netex Learning here.

12 brand new tools and resources

I compiled a list this morning of my favorite new AI tools and resources.

A full list is available here, but the best tools and resources from the list are below for Reddit community discussion.

Bard Anywhere– Chrome extension shortcut for Bard quick search on any site. Just right-click to search anywhere link

Tyles– An AI-driven note app that magically sorts your knowledge link

Humbird AI– AI-powered Talent CRM for high-growth technology companies link

DecorAI– Generating dream rooms using AI for everyone link

OdinAI– Health recommendations for your app through ChatGPT link

Waitlyst– Autonomous AI agents for startup growth link

ChatUML– Your AI assistant for making diagrams link

Ajelix– AI Excel & Google Sheets tools link

KAI– Add ChatGPT to your iPhone’s keyboard link

Talkio AI– AI-powered language training app for the browser link

GPT Workspace– Use ChatGPT in Google Workspace link

Thentic– Automate web3 tasks with no-code & AI link

OpenAI launches ten $100,000 grants for “building prototypes of a democratic process for steering AI.” link

Guanaco is a ChatGPT competitor trained on a single GPU in one day

🤖 Guanaco: A ChatGPT competitor trained on a single GPU in just one day.

🔑 Key points:

– Researchers from the University of Washington developed QLoRA (Quantized Low Rank Adapters), a method for fine-tuning large language models.

– Guanaco, a family of chatbots based on Meta’s LLaMA models, is introduced alongside QLoRA.

– The largest Guanaco variant, with 65 billion parameters, achieves nearly 99% of ChatGPT’s performance on a benchmark scored by GPT-4.

💡 Why it matters:

– Fine-tuning large language models is crucial for improving performance and training desired behaviors.

– QLoRA significantly reduces the computational resources needed for fine-tuning, making it more accessible to researchers with limited resources.

– The method could potentially be used for privacy-preserving fine-tuning on mobile devices.

🔚 Thoughts:

The development of QLoRA and Guanaco demonstrates the potential for more accessible fine-tuning of large language models on a single GPU. While the current limitations include slow 4-bit inference and weak mathematical abilities, the researchers’ future improvements could lead to broader applications and increased accessibility in natural language processing.

Source: https://the-decoder.com/guanaco-is-a-chatgpt-competitor-trained-on-a-single-gpu-in-one-day/

The Three Types Of Machine Learning Algorithms

New superbug-killing antibiotic discovered using AI – BBC News

A new antibiotic that kills some of the most dangerous drug-resistant bacteria in the world has been discovered using artificial intelligence, in a breakthrough scientists hope could revolutionize the hunt for new drugs.

TikTok is testing an in-app AI chatbot called ‘Tako’ | TechCrunch

TikTok is testing an in-app AI chatbot called ‘Tako’ designed to answer users’ questions about the platform and its features, part of the company’s wider efforts to enhance its customer service capabilities.

Nvidia stock explodes after ‘guidance for the ages’: What Wall Street is saying

Nvidia’s stock soared following what some have called a ‘guidance for the ages’, reflecting the company’s promising outlook in the tech and AI industry. Wall Street analysts are weighing in on the company’s recent developments and future potential.

Clipdrop launches Reimagine XL — Stability AI

Clipdrop, the image-editing platform from Stability AI, has launched a new feature called ‘Reimagine XL’. This AI-powered tool generates multiple variations of a single uploaded image.

How to use Google’s AI Search Generative Experience

Google’s AI Search Generative Experience is a new feature that leverages artificial intelligence to provide more accurate and nuanced search results. This guide provides an overview of the feature and instructions on how to use it effectively.

Democratic Inputs to AI

OpenAI outlines its vision for allowing public influence over AI systems’ rules, as part of its commitment to ensuring that access to, benefits from, and influence over AI and AGI are widespread.

OpenAI Could Quit Europe Over New AI Rules, CEO Altman Warns | Time

OpenAI’s CEO Sam Altman has warned that the organization could stop operating in Europe if proposed AI regulations are implemented, reflecting ongoing debate about the best way to manage and regulate the growth of artificial intelligence.

Latest AI Trends in May 2023: May 25th, 2023

How are scientists using AI to find a drug that could combat drug-resistant infections?

Scientists are leveraging the power of artificial intelligence (AI) to identify a potential drug that could be effective in combatting drug-resistant infections. This discovery could pave the way for significant advancements in medical treatments and the fight against antibiotic resistance.

What is the new Probabilistic AI that’s aware of its performance?

Researchers have developed a new form of probabilistic AI that can gauge its own performance levels. This advanced AI system offers potential improvements in accuracy and reliability for a variety of applications, enhancing user trust and interaction.

How are robots being equipped to handle fluids?

Robotics engineers are now working on equipping robots with capabilities to handle fluids, opening up possibilities for robots to perform more delicate tasks in various industries, including healthcare, food service, and industrial automation.

How are researchers using AI to identify similar materials in images?

Researchers have developed an AI system that can identify similar materials in images. The technology could significantly enhance materials science research, aiding in the discovery and development of new materials.

See why AI like ChatGPT has gotten so good, so fast

Energy Breakthrough – Machine Learning Unravels Secrets of Argyrodites

NVIDIA AI integrates with Microsoft Azure machine learning

Curbing the Carbon Footprint of Machine Learning

AI-powered Brain-Spine-Interface helps paralyzed man walk again

A man who suffered a motorcycle injury and was paralyzed for the last 12 years is now able to walk again, thanks to researchers combining cortical implants with an AI system that enables brain signals to translate into spinal stimuli. This research paper in Nature caught my eye so I had to do a deep dive!

Full breakdown is available here

Why is this a milestone?

-

Past medical advances have shown that signals can reactivate paralyzed limbs, but they’ve been limited in scope. We’ve done this with human hands, legs, and even paralyzed monkeys before.

-

This time, scientists developed a real-time system that converts brain signals into lower body stimuli. The result is that the man can now live life — going to bars, climbing stairs, going up steep ramps. They released the study after their subject used this system for a full year. This is way more than a limited scope science experiment.

-

The unlock here was powered by AI. We’ve previously talked about how AI can decode human thoughts through an LLM. Here, researchers used a set of advanced AI algos to rapidly calibrate and translate his brain signals into muscle stimuli with 74% accuracy, all with average latency of just 1.1 seconds.

What can he now do: switch between stand/sit positions, walk up ramps, move up stair steps, and more.

What’s more: this new AI-powered Brain-Spine-Interface also helped him recover additional muscle functions, even when the system wasn’t directly stimulating his lower body.

-

Researchers found notable neurological recovery in his general skills to walk, balance, carry weight and more.

-

This could open up even more pathways to help paralyzed individuals recover functioning motor skills again. Past progress here has been promising but limited, and this new AI-powered system demonstrated substantial improvement over previous studies.

Where could this go from here?

-

My take is that LLMs might power even further gains. As we saw with a prior Nature study where LLMs are able to decode human MRI signals, the power of an LLM to take a fuzzy set of signals and derive clear meaning from it transcends past AI approaches.

-

The ability for powerful LLMs to run on smaller devices could simultaneously add further unlocks. The researchers had to make do with a full-scale laptop running AI algos. Imagine if this could be done real-time on your mobile phone.

P.S. If you like this kind of analysis, the author offers a free newsletter that tracks the biggest issues and implications of generative AI tech. It’s sent once a week and helps you stay up-to-date in the time it takes to have your Sunday morning coffee.

How has AI improved your life?

I am a touring musician in a country music band. We’re completely independent, which means I pretty much have to do the whole backend myself, including graphic design of all the flyers, posters, merch, etc. I’m not a graphic designer by trade. It’s something I actually enjoy doing, but it’s extremely time-consuming if you want it to look right.

But now, with the help of some of these text-to-image AI tools, I have reduced the time I spend designing by 90%. It’s not perfect, but I spend the time I save creating more music.

I know AI scares the crap out of a lot of people; however, I’m getting more of my life back because of these breakthroughs. If you know any AI tools that can help independent musicians, let me know.

How Microsoft’s AI innovations will change your life (Microsoft Keynote Key Moments)

The Microsoft 2023 keynote is out and there are some really mind-blowing updates. I do not know where all this will go, but it’s important to be aware of the developments. So if you missed it, I will briefly summarise it here.

-

Nadella announced Windows Copilot and Microsoft Fabric, two new products that bring AI assistance to Windows 11 users and data analytics for the era of AI, respectively.

-

Nadella unveiled Microsoft Places and Microsoft Designer, two new features that leverage AI to create immersive and interactive experiences for users in Microsoft 365 apps.

-

Nadella announced that Power Platform is getting new features that will make it even easier for users to create no-code solutions. For example, Power Apps will have a new feature called App Ideas that will allow users to create apps by simply describing what they want in natural language.

If you want a short summary of everything that happened, please check out the post. I would really appreciate it.

AI vs. “Algorithms.”: What is the difference between AI and “Algorithms”?

Artificial Intelligence (AI) and algorithms are both important aspects of computing, but they serve different functions and represent different levels of complexity.

An algorithm is a set of instructions that a computer follows to complete a task. These tasks can range from basic arithmetic to complex procedures like sorting data. Every piece of software uses algorithms to function. Essentially, an algorithm is like a recipe, detailing a list of steps that need to be taken in order to achieve a certain outcome.

AI, on the other hand, refers to a broad field of computer science that focuses on creating systems capable of tasks that normally require human intelligence. This includes things like learning, reasoning, problem-solving, perception, and language understanding. The goal of AI is to create systems that can perform these tasks autonomously.

While AI systems use algorithms as part of their operation, not all algorithms are part of an AI system. For instance, a simple sorting algorithm doesn’t learn or adapt over time; it just follows a set of instructions. Conversely, an AI system like a neural network uses complex algorithms to learn from data and improve its performance over time.

In summary, all AI uses algorithms, but not all algorithms are used in AI.
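To make the distinction concrete, here is a minimal Python sketch (an illustration added for this roundup, not taken from any cited source). `sort_scores` is a fixed algorithm: the same steps every time. `fit_threshold` is a toy learning procedure whose result depends on the labelled examples it is given. Both function names and the data are hypothetical.

```python
def sort_scores(scores):
    """Fixed algorithm: always follows the same steps, never adapts."""
    return sorted(scores)


def fit_threshold(examples):
    """Toy learner: pick the cut-off that best separates labelled examples.

    examples is a list of (value, label) pairs with label 0 or 1.
    """
    best_threshold, best_correct = None, -1
    for candidate, _ in examples:
        # Count how many examples are classified correctly if we predict
        # label 1 for every value at or above this candidate cut-off.
        correct = sum((value >= candidate) == bool(label)
                      for value, label in examples)
        if correct > best_correct:
            best_threshold, best_correct = candidate, correct
    return best_threshold


print(sort_scores([3, 1, 2]))
small = [(1, 0), (2, 0), (8, 1), (9, 1)]
shifted = [(10, 0), (20, 0), (80, 1), (90, 1)]
print(fit_threshold(small))
print(fit_threshold(shifted))
```

The sorting function behaves identically no matter what it has seen before, while the learned threshold moves when the training data changes. That dependence on data is the part usually meant by "learning".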

Prompt Engineering: The Ultimate Guide with All the Commands

https://danielkliewer.com/prompt-engineering-the-ultimate-guide-with-all-the-commands/

If you’re as fascinated by AI as I am, then you won’t want to miss this incredible blog post on prompt engineering. Written by AI itself, this guide is an absolute goldmine for anyone looking to dive deeper into crafting prompts that elicit mind-blowing responses from AI models.

Prompt engineering is an art that requires a deep understanding of the model’s capabilities and limitations. This article provides a step-by-step approach to help you master the craft. From starting with clear goals to utilizing relevant keywords and providing concrete examples, you’ll learn how to supercharge your prompts and unlock the true potential of AI.

But wait, there’s more! The article also delves into fine-tuning techniques, giving you the power to control output creativity, diversity, and fluency. Plus, it covers essential prompt commands and training parameters that allow you to customize and optimize the AI model’s behavior.

Trust me, folks, this is a must-read for AI enthusiasts, developers, and anyone curious about the art of prompt engineering. Don’t miss out on this ultimate guide that will revolutionize the way you interact with AI models. Happy prompt engineering!

Latest AI Trends in May 2023: May 24th, 2023

The artist using AI to turn our cities into ‘a place you’d rather live’

Will hand-to-hand combat even be a requirement for soldiers anymore? Will endurance even matter, or will a war 300 years from now be commanded from an advanced PlayStation control room?

Fully automated weapons systems that are operated with no morals, no conscience, just cold calculation.

Imagine a self-driving tank, but the entire crew compartment is available for more armor, more engine, and more ammo. It has image recognition and GPS. You can give it an order of “Here’s a box made from GPS coordinates (a geofence), go in there and kill anyone with a gun”.

But, unfortunately, it could also be given a geofence and told to kill everyone and everything, and it would not be concerned about committing a war crime.

Free ChatGPT Course: Use The OpenAI API to Code 5 Projects

Generative AI Is Coming Soon To Search, PMax And Google Ads

What Is Artificial Intelligence as a Service (AIaaS)?

Nvidia teams up with Microsoft to accelerate AI efforts for enterprises and individuals

Groundbreaking QLoRA method enables fine-tuning an LLM on consumer GPUs. Implications and full breakdown inside.

Another day, another groundbreaking piece of research I had to share. This one uniquely ties into one of the biggest threats to OpenAI’s business model: the rapid rise of open-source, and it’s another milestone moment in how fast open-source is advancing.

As always, the full deep dive is available here, but my Reddit-focused post contains all the key points for community discussion.

Why should I pay attention here?

-

Fine-tuning an existing model is already a popular and cost-effective way to enhance an existing LLM’s capabilities versus training from scratch (very expensive). The most popular method, LoRA (short for Low-Rank Adaptation), is already gaining steam in the open-source world.

-

The leaked Google “we have no moat, and neither does OpenAI” memo calls out Google (and OpenAI as well) for not adopting LoRA specifically, which may enable the open-source world to leapfrog closed-source LLMs in capability.

-

OpenAI is already acknowledging that the next generation of models is about new efficiencies. This is a milestone moment for that kind of work.

-

QLoRA is an even more efficient way of fine-tuning which truly democratizes access to fine-tuning (no longer requiring expensive GPU power)

-

It’s so efficient that researchers were able to fine-tune a 33B parameter model on a 24GB consumer GPU (RTX 3090, etc.) in 12 hours, and the result reached 97.8% of GPT-3.5’s score on a benchmark.

-

A single professional GPU with 48GB of memory can now produce the same fine-tuning results for a 65B parameter model as standard 16-bit fine-tuning, which requires 780GB of memory. This is a massive decrease in resources.

-

This is open-sourced and available now. Hugging Face already enables you to use it. Things are moving at 1000 mph here.
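The memory figures in this list can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes a 65B parameter model and a common accounting of roughly 12 bytes per parameter for full 16-bit fine-tuning with an Adam-style optimizer (2 for weights, 2 for gradients, 8 for optimizer state). These per-parameter costs are assumptions for illustration, not numbers from the post, and the estimate ignores activations, adapter weights, and quantization constants.

```python
GB = 10**9  # decimal gigabytes, to match the figures quoted in the post


def full_finetune_gb(num_params, bytes_weights=2, bytes_grads=2, bytes_optimizer=8):
    """Rough memory for full fine-tuning: weights + gradients + optimizer state."""
    return num_params * (bytes_weights + bytes_grads + bytes_optimizer) / GB


def frozen_weights_gb(num_params, bits_per_weight):
    """Memory for frozen base weights stored at a given bit width."""
    return num_params * bits_per_weight / 8 / GB


params_65b = 65 * 10**9
print(full_finetune_gb(params_65b))      # 780.0
print(frozen_weights_gb(params_65b, 4))  # 32.5
print(frozen_weights_gb(33 * 10**9, 4))  # 16.5
```

Under these assumptions, the 4-bit base weights of a 65B model (about 32.5GB) fit within a 48GB card, and those of a 33B model (about 16.5GB) fit within 24GB, leaving the remainder for adapters and activations.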

How does the science work here?

QLoRA introduces three primary improvements:

-

A new 4-bit NormalFloat (NF4) data type preserves precision well for normally distributed weights, whereas 16-bit floats are memory-intensive. The best way to think about this is that it’s like compression (but not exactly the same).

-

They quantize the quantization constants. This is akin to compressing their compression formula as well.

-

Memory spikes typical in fine-tuning are smoothed out using paged optimizers, which reduces the maximum memory required.
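To give a feel for the first two ideas, here is a deliberately simplified sketch of blockwise 4-bit quantization in plain Python. It uses evenly spaced integer levels rather than the NormalFloat levels described above, and it does not implement the second step (quantizing the constants themselves). The function names and the block size are hypothetical choices for illustration.

```python
def quantize_block(weights, levels=7):
    """Map floats to signed integers in [-levels, levels] plus one scale.

    With levels=7 each value fits in 4 bits. The scale (the block's largest
    absolute value) is the per-block quantization constant.
    """
    scale = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero
    codes = [round(w / scale * levels) for w in weights]
    return codes, scale


def dequantize_block(codes, scale, levels=7):
    """Recover approximate floats from the integer codes and the scale."""
    return [c / levels * scale for c in codes]


def quantize(weights, block_size=4):
    """Quantize a flat list block by block so each block gets its own scale."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


weights = [0.10, -0.25, 0.05, 0.50, 2.0, -1.0, 0.5, 0.0]
blocks = quantize(weights)
restored = [w for codes, scale in blocks for w in dequantize_block(codes, scale)]
worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print(blocks)
print(worst_error)
```

Giving each block its own scale limits how far a single outlier can degrade precision. The cost is one extra constant per block, which is the overhead that quantizing the quantization constants then shrinks.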

What results did they produce?

-

A 33B parameter model was fine-tuned in 12 hours on a 24GB consumer GPU. What’s more, human evaluators preferred this model to GPT-3.5 results.

-

The researchers estimate that a 7B parameter model can be fine-tuned on an iPhone 12. Running only while the phone charges overnight, it could fine-tune on 3 million tokens per night (more on why that matters below).

-

The 65B and 33B Guanaco variants consistently matched ChatGPT-3.5’s performance. While the benchmarking is imperfect (the researchers note that extensively), it’s nonetheless significant and newsworthy.

What does this mean for the future of AI?

-

Producing highly capable, state-of-the-art models no longer requires expensive compute for fine-tuning. You can do it with minimal commercial resources or on an RTX 3090 now. Everyone can be their own mad scientist.

-

Frequent fine-tuning enables models to incorporate real-time info. By bringing cost down, this is more possible.

-

Mobile devices could start to fine-tune LLMs soon. This opens up so many options for data privacy, personalized LLMs, and more.

-

Open-source is emerging as an even bigger threat to closed-source. Many of these closed-source models haven’t even considered using LoRA fine-tuning, and instead prefer to train from scratch. There’s a real question of how quickly open-source may outpace closed-source when innovations like this emerge.

Superintelligence: OpenAI Says We Have 10 Years to Prepare

Sam Altman was writing about superintelligence in 2015. Now he’s back at it. In 2015 he had his blog. Today, in 2023, he has the world’s future in his hands—or does he?

In 2015, Altman wrote a two-part blog post on why we should fear and regulate superintelligence (a must-read, I should say, if you want to understand his vision).

After reading them, it makes sense. Altman’s message is visionary, clairvoyant even.

He was writing about superintelligence eight years ago and now he has in his hands the future of the world—and the opportunity to implement all those crazy beliefs. The cycle is closing. OpenAI’s founders say we’re entering the final phase of this journey.

The post they’ve just published echoes Altman’s words: We should be careful and afraid. The only way forward is regulation. There’s no going back. Superintelligence is inevitable.

But there’s another reading; like a self-fulfilling prophecy. Or the appearance of one.

Let me ask you this: Do you think these three months of AI progress (or six, let’s be generous and include ChatGPT’s release) warrant this change of discourse?

You can read my complete analysis for The Algorithmic Bridge here.

AiToolkit V2.0, based on YOUR feedback! (1400+ AI tools)

One-Minute Daily AI News 2023/05/24

-

Microsoft launched Jugalbandi, an AI chatbot designed for mobile devices that can help all Indians — especially those in underserved communities — access information for up to 171 government programs.

-

Elon Musk thinks AI could become humanity’s uber-nanny.

-

Google introduces Product Studio, a tool that lets merchants create product imagery using generative AI.

-

Microsoft has launched the AI data analysis platform Fabric, which enables customers to store a single copy of data across multiple applications and process it in multiple programs. For example, data can be utilized for collaborative AI modeling in Synapse Data Science, while charts and dashboards can be built in Power BI business intelligence software.

Latest AI Trends in May 2023: May 23rd, 2023

Is Meta AI’s Megabyte architecture a breakthrough for Large Language Models (LLMs)?

Meta AI’s release of the Megabyte architecture presents a significant advancement in the field of AI, specifically for Large Language Models (LLMs). This architecture enables the support of over 1 million tokens, making it a potential game changer in the scale and complexity of tasks that LLMs can handle. Some experts suggest that even OpenAI might consider adopting this architecture. Discover more about this development here.

What does Google’s new Generative AI Tool, Product Studio, offer?

Google’s Product Studio is a generative AI tool that lets merchants create and edit product imagery, for example by generating new backgrounds for product photos, without the cost of a new photo shoot. For a comprehensive overview of Product Studio, check out our article here.

Why does Geoffrey Hinton believe that AI learns differently than humans?

Geoffrey Hinton, known as the Godfather of AI, has made several observations regarding the learning mechanisms of artificial intelligence. He suggests that AI processes information and learns in a manner that is fundamentally different from human learning. This difference may dictate the trajectory of AI evolution and its potential applications. For a deeper understanding of Hinton’s perspectives, read our full report here.

What is the essence of the webinar on Running LLMs performantly on CPUs Utilizing Pruning and Quantization?

This webinar focuses on techniques to optimize the performance of Large Language Models (LLMs) on Central Processing Units (CPUs). Specifically, it discusses the benefits and application of pruning and quantization strategies. To find more about this, click here.

When will AI surpass Facebook and Twitter as the major sources of fake news?

The question of when AI might surpass social platforms like Facebook and Twitter as a primary source of fake news is a complex issue. It hinges on advancements in AI technology and its potential misuse in the creation and spread of misinformation. As of now, AI technology, while advanced, is still largely a tool that must be directed. For an in-depth discussion on this topic, refer to our full article here.

AI: Enhancing or Limiting Human Intelligence?

The impact of AI on human intelligence is a topic of ongoing debate. On one hand, AI has the potential to augment human capabilities, providing tools and insights beyond our natural abilities. On the other hand, overreliance on AI could potentially limit the development of certain human skills. To learn more about this fascinating discussion, refer to our full analysis here.

What are Foundation Models?

A Foundation Model is a large AI model trained on a very large quantity of data, often by self-supervised or semi-supervised learning. In other words: the model starts from a “corpus” (the dataset it’s being trained on) and generates outputs, over and over, checking those outputs against the original data. Foundation Models, once trained, gain the ability to output complex, structured responses to prompts that resemble human replies.

The advantage of a foundational model over previous deep learning models is that it is general, and able to be adapted to a wide range of downstream tasks.
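The self-supervised idea can be illustrated in a few lines: the training targets come from the text itself, so no human labelling is needed. The sketch below is a toy that assumes whitespace tokenization and a fixed context window (both simplifications chosen for illustration). Real foundation models use learned tokenizers and neural networks rather than the frequency table shown here.

```python
from collections import Counter, defaultdict


def make_training_pairs(text, context_size=2):
    """Turn raw text into (context, next_token) pairs with no manual labels."""
    tokens = text.split()
    return [(tuple(tokens[i:i + context_size]), tokens[i + context_size])
            for i in range(len(tokens) - context_size)]


def fit_next_token_table(pairs):
    """Count which token follows each context: a stand-in for a trained model."""
    table = defaultdict(Counter)
    for context, target in pairs:
        table[context][target] += 1
    return table


def predict(table, context):
    """Return the most frequent continuation seen for this context, if any."""
    counts = table.get(tuple(context))
    return counts.most_common(1)[0][0] if counts else None


corpus = "the cat sat on the mat and the cat sat on the sofa"
pairs = make_training_pairs(corpus)
table = fit_next_token_table(pairs)
print(pairs[0])
print(predict(table, ["the", "cat"]))
```

Every pair is produced by sliding a window over the corpus, so the "label" for each example is simply the word that actually came next.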

What you need to know about Foundation Models

Foundation Models can start from very simple data – albeit vast quantities of very simple data – to build and learn very complex things. Think about how your profession is made up of many interwoven, complex and nuanced concepts and jargon: a good foundational model offers the potential to quickly and correctly answer your questions, using that vast corpus of knowledge to deliver responses in understandable language.

Some things foundation models are good at:

- Translation (from one language to another)

- Classification (putting items into correct categories)

- Clustering (grouping similar things together)

- Ranking (determining relative importance)

- Summarization (generating a concise summary of a longer text)

- Anomaly Detection (finding uncommon or unusual things)

Those capabilities could easily be a great benefit to professionals in their day-to-day work, such as reviewing large quantities of documents to find similarities, variances, and determining which are the highest importance.

What is a Large Language Model?

Large Language Models (LLMs) are a subset of Foundation Models that work with text. They are pre-trained on large text corpora and are then often fine-tuned for specific tasks or domains, such as text classification, question-answering, translation, and summarization. That fine-tuning process helps the model adapt its language understanding to the specific requirements of a particular task or application.

Large Language Models are often used for various natural language processing applications and are known for generating coherent and contextually relevant text based on the input provided. But LLMs are also subject to hallucinations, in which outputs confidently assert claims of facts that are not actually true or justified by their training data. This is not necessarily a bad thing in all cases, since it can be advantageous for LLMs to be able to mimic human creativity (like asking the LLM to write song lyrics in the style of Taylor Swift), but it is a serious concern when citing resources in a professional context. Hallucinations related to factual citations have tended to decrease as LLMs are trained more carefully both on vast, diverse data and for specific, particular tasks, and as human reviewers flag those errors.

What you need to know about Large Language Models

We already knew computers were good at manipulating data based on numbers, from Microsoft Excel to VBA to more complex databases. With LLMs, an even greater power of analysis and manipulation can be applied to unstructured data made up of words – such as legal or accounting treatises and regulations, the entire corpus of an organization’s documents, and massive, larger datasets than those.

LLMs promise to be the same force multiplier for professionals who work with words, risks, and decision-making as Excel was for professionals who work with numbers.

What is cognitive computing?

Cognitive computing is a combination of machine learning, language processing, and data mining that is designed to assist human decision-making. Cognitive computing differs from AI in that it partners with humans to find the best answer instead of AI choosing the best algorithm. The example from Deep Learning about healthcare applies here too: doctors use cognitive computing to help make a diagnosis; they are drawing from their expertise but are also aided by machine learning.

What is AutoML?

AutoML refers to the automated process of end-to-end development of machine learning models. It aims to make machine learning accessible to non-experts and improve the efficiency of experts. AutoML covers the complete pipeline, starting from raw data to deployable machine learning models. This involves data pre-processing, feature engineering, model selection, hyperparameter tuning, model validation, and prediction. The main idea is to automate repetitive tasks, which makes it possible to build models in a fraction of the time, with less human intervention.
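Here is a minimal sketch of the model-selection and hyperparameter-tuning steps in plain Python: it tries every candidate configuration, scores each on held-out validation data, and keeps the best. The k-nearest-neighbour model, the candidate values of k, and the toy dataset are all assumptions for illustration. Real AutoML tools search far larger spaces and also automate pre-processing and feature engineering.

```python
def knn_predict(train, x, k):
    """Classify x by majority vote among its k nearest training points."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > k else 0


def accuracy(train, validation, k):
    """Fraction of validation points the k-NN model labels correctly."""
    hits = sum(knn_predict(train, x, k) == label for x, label in validation)
    return hits / len(validation)


def auto_select(train, validation, candidate_ks):
    """Try each hyperparameter value and return (best_k, best_accuracy)."""
    scored = [(accuracy(train, validation, k), k) for k in candidate_ks]
    best_accuracy, best_k = max(scored, key=lambda pair: pair[0])
    return best_k, best_accuracy


# Toy 1-D dataset: label is 1 for large values, with one noisy training point.
train = [(1, 0), (2, 0), (3, 1), (4, 0), (6, 1), (7, 1), (8, 1), (9, 1)]
validation = [(2.6, 0), (3.4, 0), (5.5, 1), (7.5, 1)]
best_k, best_accuracy = auto_select(train, validation, candidate_ks=[1, 3, 5])
print(best_k, best_accuracy)
```

With k=1 the noisy training point misleads the model, while larger values of k vote it down. The search finds that automatically, which is the repetitive work AutoML aims to take off a practitioner's hands.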

Why is AutoML Important?

In traditional machine learning model development, numerous steps demand significant human time and expertise. These steps can be a barrier for many businesses and researchers with limited resources. AutoML mitigates these challenges by automating the necessary tasks.

Democratising Machine Learning

By automating the machine learning process, AutoML opens up the field to non-experts.

Individuals or companies that lack resources to hire data scientists can use AutoML tools to build effective models.

Efficiency and Accuracy

AutoML can analyse multiple algorithms and hyperparameters in less time than humans. This process leads to more accurate models by considering a broad array of possibilities that humans might overlook.

Fast Prototyping

AutoML supports rapid prototyping of models. Businesses can quickly implement and test models to make timely data-driven decisions.

Limitations and Future Directions

While AutoML has its advantages, it’s not without limitations. AutoML models can sometimes be a black box, with limited interpretability. Furthermore, it requires significant computational resources. It is important to understand these limitations when choosing to use AutoML.

As machine learning continues to evolve, AutoML is expected to play an increasingly significant role.

In the near future, we can expect more user-friendly interfaces, increased model transparency, and models capable of operating on larger datasets more efficiently. AutoML is just a facet of the broad and intriguing world of artificial intelligence. With advancements in technology, it’s clear that the future of AI holds numerous opportunities and breakthroughs waiting to be explored. In future articles, we’ll explore other AI terminologies such as Edge Computing, Recommender Systems, and Robotics Process Automation. Stay tuned to expand your knowledge of AI and its transformative potential in different domains. Embrace the journey into AI, where learning never stops and every step brings new discoveries and insights.

Read more at: https://yourstory.com/2023/05/ai-terminology-101-automl-demystified

Daily AI Update (Date: 5/23/2023): News from Meta, Google, OpenAI, Apple and TCS

-

Meta’s Massively Multilingual Speech (MMS) models expand speech-to-text & text-to-speech to support over 1,100 languages — a 10x increase from previous work, and can also identify more than 4,000 spoken languages — 40 times more than before.

-

Meta’s AI researchers introduce LIMA, a refined language model aiming to match the performance of GPT-4 or Bard. It is a 65B parameter LLaMA model fine-tuned with the standard supervised loss on only 1,000 carefully curated prompts and responses, without any reinforcement learning or human preference modeling.

-

Google AI research introduces XTREME-UP, a new benchmark for evaluating multilingual models focusing on under-represented languages. It emphasizes a realistic evaluation setting, including new and existing user-centric tasks and realistic data sizes beyond the few-shot setting.

-

Apple has posted dozens of job listings focused on AI, indicating that the company may be stepping up its AI efforts to transform its signature products. The roles span areas including visual generative modeling, proactive intelligence, and applied AI research.

-

TCS has announced an expanded partnership with Google Cloud to launch a new offering called TCS Generative AI. It will utilize Google Cloud’s generative AI services to create custom-tailored business solutions that help clients accelerate their growth and transformation.

-

OpenAI leaders propose an IAEA-like international regulatory body for governing superintelligent AI.
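For readers wondering what the "standard supervised loss" in the LIMA item above refers to, here is a minimal sketch: the average cross-entropy (negative log-likelihood) of the target tokens under the model's predicted distribution. This is an illustration only, not Meta's training code. The three-token vocabulary, the example logits, and the choice to mask out prompt positions so that only response tokens count are all assumptions.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def supervised_loss(logits_per_step, target_ids, loss_mask):
    """Mean cross-entropy over the positions where loss_mask is 1.

    logits_per_step: one list of vocabulary scores per position
    target_ids:      the correct next-token id at each position
    loss_mask:       1 to count the position, 0 to skip it
                     (masking prompt tokens is an assumption here)
    """
    total, count = 0.0, 0
    for logits, target, keep in zip(logits_per_step, target_ids, loss_mask):
        if not keep:
            continue
        total += -math.log(softmax(logits)[target])
        count += 1
    if count == 0:
        raise ValueError("loss_mask selects no positions")
    return total / count

if __name__ == "__main__":
    # Hypothetical example: 2 prompt positions (masked) followed by 2 response positions.
    logits = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
    targets = [0, 0, 1, 2]
    mask = [0, 0, 1, 1]
    print(f"loss on response tokens: {supervised_loss(logits, targets, mask):.4f}")
```

Fine-tuning minimises this quantity over the curated prompt and response pairs, with no reward model or preference data involved, which is the contrast the LIMA item draws.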

Latest AI Trends in May 2023: May 22nd, 2023

Microsoft Researchers Introduce Reprompting: An Iterative Sampling Algorithm that Searches for the Chain-of-Thought (CoT) Recipes for a Given Task without Human Intervention

Sci-fi author ‘writes’ 97 AI-generated books in nine months

Artificial Intelligence and Machine Learning

Latest AI Trends in May 2023: May 21st, 2023

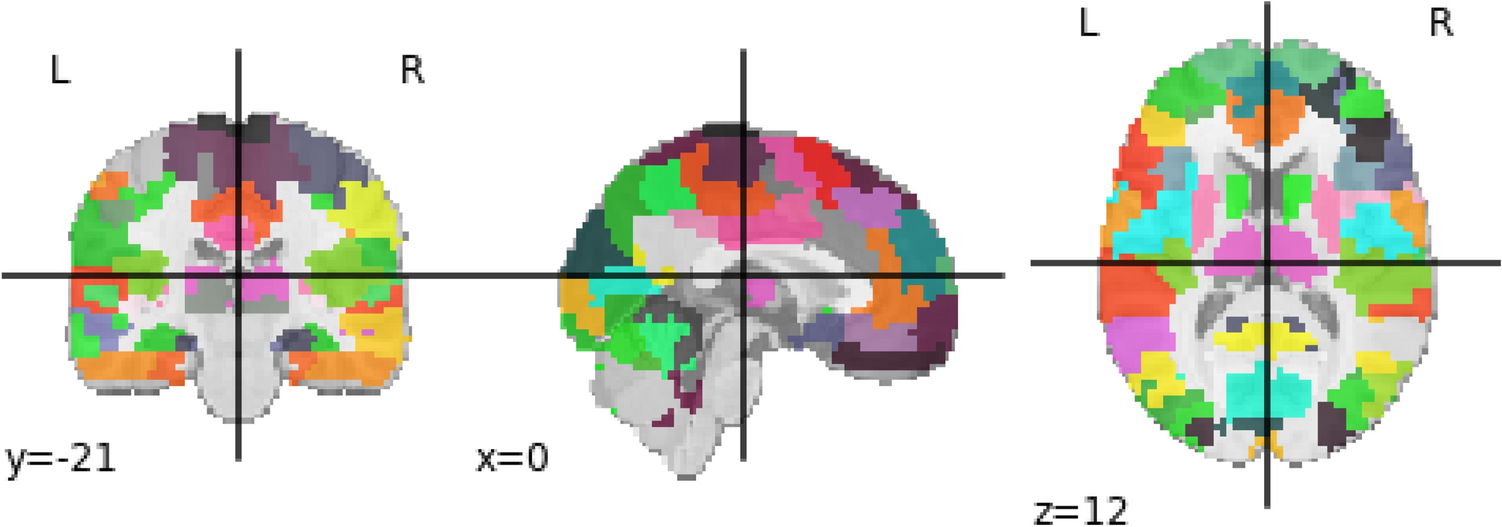

AI Deep Learning Decodes Hand Gestures from Brain Images

How To Harmonize Human Creativity With Machine Learning

How does Alpaca follow your instructions? Stanford Researchers Discover How the Alpaca AI Model Uses Causal Models and Interpretable Variables for Numerical Reasoning

Generative AI That’s Based On The Murky Devious Dark Web Might Ironically Be The Best Thing Ever, Says AI Ethics And AI Law

Daily AI Update (Date: 5/22/2023)

-

A groundbreaking method called Mind-Video has been developed to reconstruct continuous visual experiences in videos using brain recordings. This innovative approach achieves high-quality video reconstruction with various frame rates by combining masked brain modeling, multimodal contrastive learning, and augmented Stable Diffusion.

-

Microsoft’s Bing introduces new features and improvements, including chat history, charts and visualizations, export options, video overlay, optimized recipe answers, share fixes, improved auto-suggest quality, and privacy enhancements in the Edge sidebar. These updates enhance the user experience, making search more efficient and user-friendly.

-

The next iteration of Perplexity has arrived: Copilot, an interactive AI search companion, enhances your search experience by providing personalized answers through interactive inputs, leveraging the power of GPT-4.

-

In the U.S., RoboTire has developed an AI-powered robot that can change a set of four wheels in approximately 23 minutes, twice as fast as a human technician. The system aims to improve efficiency, reduce labor costs, and address labor shortages.

-

MS Artificial Nose – An intelligent device that identifies smells with a simple gas sensor and a micro-controller.

-

AI-generated image of Pentagon explosion causes market drop.

-

Intel on Monday provided a handful of new details on a chip for AI computing it plans to introduce in 2025 as it shifts strategy to compete against Nvidia and AMD.

-

Bill Gates says top AI agents will replace search and shopping sites.

-

AI predicts the function of enzymes: An international team including bioinformaticians from Heinrich Heine University Düsseldorf (HHU) developed an AI method that predicts with a high degree of accuracy whether an enzyme can work with a specific substrate.

-

‘Deepfake’ scam in China fans worries over AI-driven fraud. A fraud in northern China that used sophisticated “deepfake” technology to convince a man to transfer money to a supposed friend has sparked concern about the potential of artificial intelligence (AI) techniques to aid financial crimes.

Governing AI-Ghosts

One of the topics in AI I’m most interested in is mimetic AI: systems that mimic human behavior in the style of a specific human (imagine personal assistants trained on your behavior, art generators trained on your art, or clones of your voice) and that continue to mimic you after you’re dead.

Examples are already plentiful: a synthetic voiceover by the deceased chef Anthony Bourdain caused a global stir one year ago; the illustration style of artist Kim Jung-Gi was immediately used by a fan to train a Stable Diffusion model after his death; Muhammad Ahmed developed an AI chatbot in his image for the grandkids he would never meet; Sony recently used an AI clone of the dead voice actor Kenji Utsumi for an audiobook; Tom Hanks just said that he very well might appear in movies after he’s dead; and a viral piece for the SF Chronicle told the story of the Jessica Simulation, in which a guy resurrected his dead girlfriend as a chatbot.

Also, I just learned that there is a subset of this particular application of AI tech called Grief Technology, and there is actually a company called AI seance offering an “AI-generated Ouija board for closure”, as they call it.

I think this last example in particular is horrible and has important implications for mental health. Grief is a psychological process in which you learn to accept loss. It’s a deeply personal process I went through twice, and both times were different, and always challenging. Creating an artificial illusion of continuity of a loved one after their death will disrupt this process, which every single human on earth will go through multiple times in their lives. The consequences are potentially catastrophic for our mental health, and it’s not stopping there.

A new paper intriguingly titled Governing Ghostbots discusses exactly these implications, and it goes into territory even I didn’t think about: What happens when you train a sexbot on your partner and then she dies? Is continuing that virtual sex-fetish then “extreme pornography as involving necrophilia” and deemed illegal per se? The paper also speaks about the legal aspects of such a ghostbot being harmful to the deceased’s antemortem persona. In Germany, at least, there is a law against that called ‘Verunglimpfung des Andenkens Verstorbener’, which translates to ‘disparagement of the memory of the deceased’.

Expensive gimmicks like concerts of deceased popstars “performing” as holograms on stage, like Tupac, Whitney Houston or Michael Jackson, introduced ethical debates about post-mortem privacy ten years ago. Now AI systems open similar tech to everyone: you can simply build an open source AI chatbot of your dead grandma, sync it with an animated avatar and make her say whatever you want on your phone. Do we really want that? Would she approve? But what about being able to make a virtual post-mortem memorial where she dances on stage in the style of her most beloved artist, singing her favorite song?

Will we all be right back and will you join me in the club at San Junipero?

And while I don’t think we’ll see conscious AI systems anytime soon or even in my lifetime, just for the sake of the argument: What if we train future AI systems on real people, they die, and the system gains consciousness or something similar? Then what?

These are philosophical questions related to the Teletransportation paradox explored by Stanislaw Lem in his Dialogs, in one of which he talks about a teleporting machine that effectively kills you in one location while constructing a replica of yourself, atom by atom, in another place. Is that a true continuation of yourself? We can’t know, and we are building digital systems that can perform something that resembles this replication process now.

Finding out about those psychological questions will be one of the most interesting aspects of this technology, extending our philosophical understanding of who we are.

How can we expect aligned AI if we don’t even have aligned humans?

When we talk about AI alignment, we envision designing artificial intelligence that behaves in a way that aligns with human values and goals. But isn’t it fair to ask whether we, as humans, have even been successful in aligning ourselves?

Throughout history, humans have disagreed about almost everything – from politics to religious beliefs, from ethical principles to personal preferences. We’ve not been able to fully ‘align’ on universally acceptable definitions for concepts like ‘good,’ ‘right,’ or ‘justice.’ Even on basic issues, like climate change, we find a vast array of contrasting perspectives, even though the scientific consensus is overwhelmingly one-sided.

It seems we are demanding a degree of alignment from AI that we’ve been unable to achieve amongst ourselves.

What do you all think? Does the persistent discord among humans undermine the idea of perfect AI alignment? If so, how should we approach AI development, and what are the best ways to ensure that AI benefits all of humanity?

Latest AI Trends in May 2023: May 19th, 2023

Is AI vs Humans really a possibility?

According to the Internet, 50% say the chance of that happening is extremely significant; even 10-20% is a very significant probability.

I know there are a lot of misinformation campaigns going on that use AI, such as deepfake videos and whatnot, and that can lead to somewhat destructive results, but do you think AI being able to nuke humans is possible?

Answer:

AI will never “nuke humans”. Let’s be clear about this: The dangers surrounding AI are not inherent to AI. What makes AI dangerous is people.

We need to be concerned about people in positions of power wielding or controlling these tools to exploit others, and we need to be concerned about the people building these tools simply getting it wrong and developing something without sufficient safety built in, or something misaligned with humanity’s best interests.

Rebuke:

That’s what’s happening already and has been gradually increasing for a long time. What is going to occur is a situation where greater than human intelligence will be created which no one will be able to “use” because they won’t be able to understand what it’s doing. Being concerned about bias in a language model is just like being concerned with bias in a language, which is something we’re already dealing with and a problem people have studied. Artificial intelligence is beyond this. It won’t be used by people against other people. Rather, people will be compelled to use it.

We’ll be able to create an AI which is demonstrably less biased than any human and then in the interest of anti-bias (or correct medical diagnoses, or reducing vehicle accidents), we will be compelled to use it because otherwise we’ll just be sacrificing people for nothing. It won’t just be an issue of it being profitable, it’ll be that it’s simply better. If you’re a communist, you’ll also want an AI running things just as much as a capitalist does.

Even dealing with this will require a new philosophical understanding of what humanism should be. Since humanism was typically connected to humans’ rational capability, and now AI will be superior in this capability, we will be tempted to embrace a reactionary, anti-rational form of humanism which is basically what the stated ideology of fascism is.

Exactly how this crisis unfolds won’t be like any movie you can imagine, though parts of it may be, as some things already happening are. But it’ll be just as massive and likely as catastrophic as what you’re imagining.

How much has AI developed these days

How to Pass and Renew Azure Artificial Intelligence Engineer (AI-102) Certificate

In this article, we will discuss Azure Artificial Intelligence Engineer certification. As cloud computing grows, more services are being offered which include artificial intelligence.

Microsoft Azure is one of the leading cloud computing platforms, offering hundreds of services to customers, especially enterprises, ranging from cloud infrastructure to big data and artificial intelligence. Its comprehensive end-to-end services appeal to most organizations.

Microsoft Azure offers a wide variety of cloud certifications including Azure Artificial Intelligence certification. There are now thirteen Microsoft Azure Certifications divided into three levels which are Fundamental, Associate and Expert.

The certifications for Azure Artificial Intelligence have Fundamental and Associate levels only. The Fundamental-level certification is Exam AI-900: Microsoft Azure AI Fundamentals, and the Associate-level certification is Exam AI-102: Designing and Implementing a Microsoft Azure AI Solution.